This version of this document is no longer maintained. For the latest documentation, see http://www.qnx.com/developers/docs.

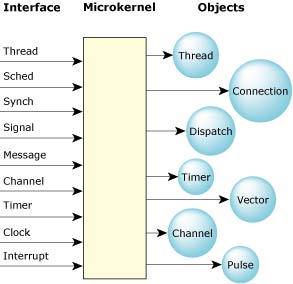

The QNX Neutrino microkernel, procnto, implements the core POSIX features used in embedded realtime systems, along with the fundamental QNX Neutrino message-passing services. The POSIX features that aren't implemented in the microkernel (file and device I/O, for example) are provided by optional processes and shared libraries.

To determine the release version of the kernel on your system, use the uname -a command. For more information, see its entry in the Utilities Reference.

Successive QNX microkernels have seen a reduction in the code required to implement a given kernel call. The object definitions at the lowest layer in the kernel code have become more specific, allowing greater code reuse (such as folding various forms of POSIX signals, realtime signals, and QNX pulses into common data structures and code to manipulate those structures).

At its lowest level, the microkernel contains a few fundamental objects and the highly tuned routines that manipulate them. The OS is built from this foundation.

The QNX Neutrino microkernel.

Some developers have assumed that our microkernel is implemented entirely in assembly code for size or performance reasons. In fact, our implementation is coded primarily in C; size and performance goals are achieved through successively refined algorithms and data structures, rather than via assembly-level peep-hole optimizations.

Historically, the “application pressure” on QNX operating systems has been from both ends of the computing spectrum: from memory-limited embedded systems all the way up to high-end SMP (symmetric multiprocessing) machines with gigabytes of physical memory. Accordingly, the design goals for QNX Neutrino accommodate both seemingly exclusive sets of functionality. Pursuing these goals is intended to extend the reach of systems well beyond what other OS implementations could address.

Since QNX Neutrino implements the majority of the realtime and thread services directly in the microkernel, these services are available even without the presence of additional OS modules.

In addition, some of the profiles defined by POSIX suggest that these services be present without necessarily requiring a process model. In order to accommodate this, the OS provides direct support for threads, but relies on its process manager portion to extend this functionality to processes containing multiple threads.

Note that many realtime executives and kernels provide only a nonmemory-protected threaded model, with no process model and/or protected memory model at all. Without a process model, full POSIX compliance cannot be achieved.

The QNX Neutrino microkernel has kernel calls to support the following:

The entire OS is built upon these calls. The OS is fully preemptible, even while passing messages between processes; it resumes the message pass where it left off before preemption.

The minimal complexity of the microkernel helps place an upper bound on the longest nonpreemptible code path through the kernel, while the small code size makes addressing complex multiprocessor issues a tractable problem. Services were chosen for inclusion in the microkernel on the basis of having a short execution path. Operations requiring significant work (e.g. process loading) were assigned to external processes/threads, where the effort to enter the context of that thread would be insignificant compared to the work done within the thread to service the request.

Rigorous application of this rule to dividing the functionality between the kernel and external processes destroys the myth that a microkernel OS must incur higher runtime overhead than a monolithic kernel OS. Given the work done between context switches (implicit in a message pass), and the very quick context-switch times that result from the simplified kernel, the time spent performing context switches becomes “lost in the noise” of the work done to service the requests communicated by the message passing between the processes that make up the OS.

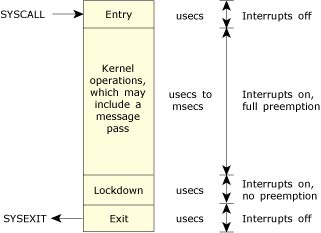

The following diagram shows the preemption details for the non-SMP kernel (x86 implementation).

QNX Neutrino preemption details.

Interrupts are disabled, or preemption is held off, for only very brief intervals (typically on the order of hundreds of nanoseconds).

When building an application (realtime, embedded, graphical, or otherwise), the developer may want several algorithms within the application to execute concurrently. This concurrency is achieved by using the POSIX thread model, which defines a process as containing one or more threads of execution.

A thread can be thought of as the minimum “unit of execution,” the unit of scheduling and execution in the microkernel. A process, on the other hand, can be thought of as a “container” for threads, defining the “address space” within which threads will execute. A process will always contain at least one thread.

Depending on the nature of the application, threads might execute independently with no need to communicate between the algorithms (unlikely), or they may need to be tightly coupled, with high-bandwidth communications and tight synchronization. To assist in this communication and synchronization, QNX Neutrino provides a rich variety of IPC and synchronization services.

The following pthread_* (POSIX Threads) library calls don't involve any microkernel thread calls:

The following table lists the POSIX thread calls that have a corresponding microkernel thread call, allowing you to choose either interface:

| POSIX call | Microkernel call | Description |

|---|---|---|

| pthread_create() | ThreadCreate() | Create a new thread of execution. |

| pthread_exit() | ThreadDestroy() | Destroy a thread. |

| pthread_detach() | ThreadDetach() | Detach a thread so it doesn't need to be joined. |

| pthread_join() | ThreadJoin() | Join a thread waiting for its exit status. |

| pthread_cancel() | ThreadCancel() | Cancel a thread at the next cancellation point. |

| N/A | ThreadCtl() | Change a thread's Neutrino-specific thread characteristics. |

| pthread_mutex_init() | SyncTypeCreate() | Create a mutex. |

| pthread_mutex_destroy() | SyncDestroy() | Destroy a mutex. |

| pthread_mutex_lock() | SyncMutexLock() | Lock a mutex. |

| pthread_mutex_trylock() | SyncMutexLock() | Conditionally lock a mutex. |

| pthread_mutex_unlock() | SyncMutexUnlock() | Unlock a mutex. |

| pthread_cond_init() | SyncTypeCreate() | Create a condition variable. |

| pthread_cond_destroy() | SyncDestroy() | Destroy a condition variable. |

| pthread_cond_wait() | SyncCondvarWait() | Wait on a condition variable. |

| pthread_cond_signal() | SyncCondvarSignal() | Signal a condition variable. |

| pthread_cond_broadcast() | SyncCondvarSignal() | Broadcast a condition variable. |

| pthread_getschedparam() | SchedGet() | Get the scheduling parameters and policy of a thread. |

| pthread_setschedparam() | SchedSet() | Set the scheduling parameters and policy of a thread. |

| pthread_sigmask() | SignalProcmask() | Examine or set a thread's signal mask. |

| pthread_kill() | SignalKill() | Send a signal to a specific thread. |

The OS can be configured to provide a mix of threads and processes (as defined by POSIX). Each process is MMU-protected from each other, and each process may contain one or more threads that share the process's address space.

The environment you choose affects not only the concurrency capabilities of the application, but also the IPC and synchronization services the application might make use of.

Even though the common term “IPC” refers to communicating processes, we use it here to describe the communication between threads, whether they're within the same process or separate processes.

For information about processes and threads from the programming point of view, see the Processes and Threads chapter of Getting Started with QNX Neutrino, and the Programming Overview and Processes chapters of the Neutrino Programmer's Guide.

Although threads within a process share everything within the process's address space, each thread still has some “private” data. In some cases, this private data is protected within the kernel (e.g. the tid or thread ID), while other private data resides unprotected in the process's address space (e.g. each thread has a stack for its own use). Some of the more noteworthy thread-private resources are:

In Neutrino, processes don't have priorities; their threads do.

For more information, see “Thread scheduling,” later in this chapter.

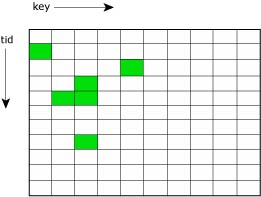

Thread-specific data, implemented in the pthread library and stored in the TLS, provides a mechanism for associating a process-global integer key with a unique per-thread data value. To use thread-specific data, you first create a new key and then bind a unique data value to the key (per thread). The data value may, for example, be an integer or a pointer to a dynamically allocated data structure. Subsequently, each thread can use the key to retrieve the data value that it bound.

A typical application of thread-specific data is for a thread-safe function that needs to maintain a context for each calling thread.

Sparse matrix (tid,key) to value mapping.

You use the following functions to create and manipulate this data:

| Function | Description |

|---|---|

| pthread_key_create() | Create a data key with destructor function |

| pthread_key_delete() | Destroy a data key |

| pthread_setspecific() | Bind a data value to a data key |

| pthread_getspecific() | Return the data value bound to a data key |
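The four functions above are typically combined as in the following minimal sketch of a thread-safe function that keeps a private context for each calling thread. The names ctx_key, next_call_number(), and call_number_in_new_thread() are hypothetical and chosen only for illustration; the sketch uses standard POSIX calls and isn't taken from the QNX libraries.

```c
#include <pthread.h>
#include <stdlib.h>

static pthread_key_t  ctx_key;                      /* process-global key (hypothetical name) */
static pthread_once_t ctx_once = PTHREAD_ONCE_INIT;

/* Runs once per process: create the key. free() is registered as the
 * destructor, so each thread's bound value is released when it exits. */
static void ctx_make_key(void)
{
    (void)pthread_key_create(&ctx_key, free);
}

/* Thread-safe call counter: every calling thread sees its own count.
 * Returns the number of calls made so far by this thread, or -1 on error. */
int next_call_number(void)
{
    pthread_once(&ctx_once, ctx_make_key);

    int *count = pthread_getspecific(ctx_key);
    if (count == NULL) {                            /* first call from this thread */
        count = calloc(1, sizeof *count);
        if (count == NULL)
            return -1;
        if (pthread_setspecific(ctx_key, count) != 0) {
            free(count);
            return -1;
        }
    }
    return ++*count;
}

/* Thread body: calls the counter three times and reports the last value. */
static void *worker(void *arg)
{
    int *result = arg;
    next_call_number();
    next_call_number();
    *result = next_call_number();
    return NULL;
}

/* Runs worker() in a second thread and returns the value it observed. */
int call_number_in_new_thread(void)
{
    pthread_t tid;
    int result = 0;

    if (pthread_create(&tid, NULL, worker, &result) != 0)
        return -1;
    pthread_join(tid, NULL);
    return result;
}
```

Because the key is shared but the bound values are not, calls made in the new thread don't disturb the count that the calling thread sees.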

The number of threads within a process can vary widely, with threads being created and destroyed dynamically. Thread creation (pthread_create()) involves allocating and initializing the necessary resources within the process's address space (e.g. thread stack) and starting the execution of the thread at some function in the address space.
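As a minimal sketch of dynamic thread creation using only the POSIX interface, the following code starts a thread at a chosen function, passes it an argument, and collects its exit status with pthread_join(). The function names sum_to_n() and run_sum_thread() are hypothetical.

```c
#include <pthread.h>
#include <stdint.h>

/* Thread entry point: sums 1..n and hands the total back as the
 * thread's exit status. */
static void *sum_to_n(void *arg)
{
    intptr_t n = (intptr_t)arg;
    intptr_t total = 0;

    for (intptr_t i = 1; i <= n; i++)
        total += i;
    return (void *)total;       /* same effect as pthread_exit((void *)total) */
}

/* Creates a thread with default attributes, waits for it to terminate,
 * and returns its result (or -1 if the thread couldn't be created or joined). */
long run_sum_thread(long n)
{
    pthread_t tid;
    void *status;

    if (pthread_create(&tid, NULL, sum_to_n, (void *)(intptr_t)n) != 0)
        return -1;
    if (pthread_join(tid, &status) != 0)
        return -1;
    return (long)(intptr_t)status;
}
```

Passing NULL for the attributes gives the thread a default stack; a pthread_attr_t object could be supplied instead to control the stack size or scheduling parameters.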

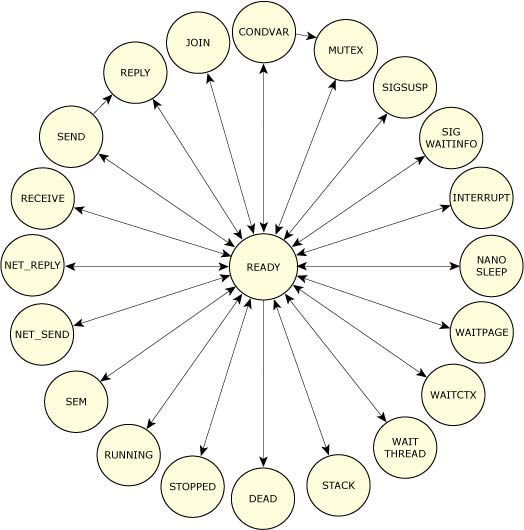

Thread termination (pthread_exit(), pthread_cancel()) involves stopping the thread and reclaiming the thread's resources. As a thread executes, its state can generally be described as either “ready” or “blocked.” More specifically, it can be one of the following:

Possible thread states.

The execution of a running thread is temporarily suspended whenever the microkernel is entered as the result of a kernel call, exception, or hardware interrupt. A scheduling decision is made whenever the execution state of any thread changes — it doesn't matter which processes the threads might reside within. Threads are scheduled globally across all processes.

Normally, the execution of the suspended thread will resume, but the thread scheduler will perform a context switch from one thread to another whenever the running thread:

The running thread is blocked when it must wait for some event to occur (response to an IPC request, wait on a mutex, etc.). The blocked thread is removed from the running array and the highest-priority ready thread is then run. When the blocked thread is subsequently unblocked, it's placed on the end of the ready queue for that priority level.

The running thread is preempted when a higher-priority thread is placed on the ready queue (it becomes READY, as the result of its block condition being resolved). The preempted thread is put at the beginning of the ready queue for that priority and the higher-priority thread runs.

The running thread voluntarily yields the processor (sched_yield()) and is placed on the end of the ready queue for that priority. The highest-priority thread then runs (which may still be the thread that just yielded).

Every thread is assigned a priority. The thread scheduler selects the next thread to run by looking at the priority assigned to every thread that is READY (i.e. capable of using the CPU). The thread with the highest priority is selected to run.

The following diagram shows the ready queue for five threads (B–F) that are READY. Thread A is currently running. All other threads (G–Z) are BLOCKED. Threads A, B, and C are at the highest priority, so they'll share the processor based on the running thread's scheduling algorithm.

The ready queue.

The OS supports a total of 256 scheduling priority levels. A non-root thread can set its priority to a level from 1 to 63 (the highest priority), independent of the scheduling policy. Only root threads (i.e. those whose effective uid is 0) are allowed to set priorities above 63. The special idle thread (in the process manager) has priority 0 and is always ready to run. A thread inherits the priority of its parent thread by default.

You can change the allowed priority range for non-root processes with the procnto -P option:

procnto -P priority

Here's a summary of the ranges:

| Priority level | Owner |

|---|---|

| 0 | Idle thread |

| 1 through priority − 1 | Non-root or root |

| priority through 255 | root |

Note that in order to prevent priority inversion, the kernel may temporarily boost a thread's priority. For more information, see “Priority inheritance and mutexes” later in this chapter, and “Priority inheritance and messages” in the Interprocess Communication (IPC) chapter.

The threads on the ready queue are ordered by priority. The ready queue is actually implemented as 256 separate queues, one for each priority. The first thread in the highest-priority queue is selected to run.
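To make the selection rule concrete, here's a toy model of a ready queue built from 256 FIFO queues. It's only a sketch for illustration: the type and function names are hypothetical, the per-level capacity is an arbitrary assumption, and the kernel's real data structures aren't described in this chapter.

```c
#include <stddef.h>

#define NUM_PRIORITIES 256
#define MAX_PER_LEVEL  8        /* arbitrary capacity for this sketch */

typedef struct {
    int    ids[MAX_PER_LEVEL];  /* circular FIFO of thread IDs */
    size_t head;                /* index of the first (oldest) entry */
    size_t count;               /* number of queued entries */
} fifo_t;

typedef struct {
    fifo_t level[NUM_PRIORITIES];   /* one queue per priority level */
} ready_queue_t;

/* Place a thread on the end of the queue for its priority.
 * Returns 0 on success, -1 if the priority is invalid or the queue is full. */
int rq_make_ready(ready_queue_t *rq, int tid, int priority)
{
    if (priority < 0 || priority >= NUM_PRIORITIES)
        return -1;

    fifo_t *q = &rq->level[priority];
    if (q->count == MAX_PER_LEVEL)
        return -1;

    q->ids[(q->head + q->count) % MAX_PER_LEVEL] = tid;
    q->count++;
    return 0;
}

/* Remove and return the first thread in the highest-priority
 * nonempty queue, or -1 if no thread is ready. */
int rq_pick_next(ready_queue_t *rq)
{
    for (int p = NUM_PRIORITIES - 1; p >= 0; p--) {
        fifo_t *q = &rq->level[p];
        if (q->count > 0) {
            int tid = q->ids[q->head];
            q->head = (q->head + 1) % MAX_PER_LEVEL;
            q->count--;
            return tid;
        }
    }
    return -1;
}
```

With a zero-initialized ready_queue_t, making thread 1 ready at priority 10 and threads 2 and 3 ready at priority 63 causes rq_pick_next() to return 2, then 3, then 1.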

Most of the time, threads are queued in FIFO order in the queue of their priority, but there are some exceptions:

To meet the needs of various applications, QNX Neutrino provides these scheduling algorithms:

Each thread in the system may run using any method. The methods are effective on a per-thread basis, not on a global basis for all threads and processes on a node.

Remember that the FIFO and round-robin scheduling algorithms apply only when two or more threads that share the same priority are READY (i.e. the threads are directly competing with each other). The sporadic method, however, employs a “budget” for a thread's execution. In all cases, if a higher-priority thread becomes READY, it immediately preempts all lower-priority threads.

In the following diagram, three threads of equal priority are READY. If Thread A blocks, Thread B will run.

Thread A blocks; Thread B runs.

Although a thread inherits its scheduling algorithm from its parent process, the thread can request to change the algorithm applied by the kernel.

In FIFO scheduling, a thread selected to run continues executing until it:

FIFO scheduling.

In round-robin scheduling, a thread selected to run continues executing until it:

As the following diagram shows, Thread A ran until it consumed its timeslice; the next READY thread (Thread B) now runs:

Round-robin scheduling.

A timeslice is the unit of time assigned to every thread. Once it consumes its timeslice, a thread is preempted and the next READY thread at the same priority level is given control. A timeslice is 4 × the clock period. (For more information, see the entry for ClockPeriod() in the Neutrino Library Reference.)

Apart from time slicing, round-robin scheduling is identical to FIFO scheduling.

The sporadic scheduling algorithm is generally used to provide a capped limit on the execution time of a thread within a given period of time. This behavior is essential when Rate Monotonic Analysis (RMA) is being performed on a system that services both periodic and aperiodic events. Essentially, this algorithm allows a thread to service aperiodic events without jeopardizing the hard deadlines of other threads or processes in the system.

As in FIFO scheduling, a thread using sporadic scheduling continues executing until it blocks or is preempted by a higher-priority thread. And as in adaptive scheduling, a thread using sporadic scheduling will drop in priority, but with sporadic scheduling you have much more precise control over the thread's behavior.

Under sporadic scheduling, a thread's priority can oscillate dynamically between a foreground or normal priority and a background or low priority. Using the following parameters, you can control the conditions of this sporadic shift:

In a poorly configured system, a thread's execution budget may become eroded because of too much blocking (i.e. it won't receive enough replenishments).

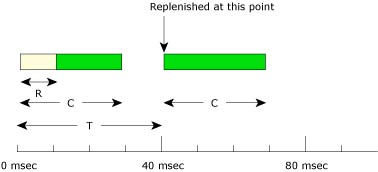

As the following diagram shows, the sporadic scheduling policy establishes a thread's initial execution budget (C), which is consumed by the thread as it runs and is replenished periodically (for the amount T). When a thread blocks, the amount of the execution budget that's been consumed (R) is arranged to be replenished at some later time (e.g. at 40 msec) after the thread first became ready to run.

A thread's budget is replenished periodically.

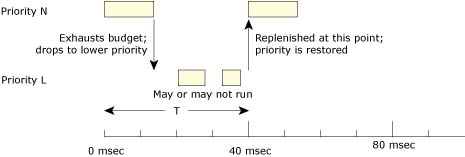

At its normal priority N, a thread will execute for the amount of time defined by its initial execution budget C. As soon as this time is exhausted, the priority of the thread will drop to its low priority L until the replenishment operation occurs.

Assume, for example, a system where the thread never blocks or is never preempted:

A thread drops in priority until its budget is replenished.

Here the thread will drop to its low-priority (background) level, where it may or may not get a chance to run depending on the priority of other threads in the system.

Once the replenishment occurs, the thread's priority is raised to its original level. This guarantees that within a properly configured system, the thread will be given the opportunity every period T to run for a maximum execution time C. This ensures that a thread running at priority N will consume at most a fraction C/T of the system's resources (for example, 25% when C is 10 msec and T is 40 msec).
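The arithmetic for the simple case of a thread that never blocks and is never preempted can be sketched as a small simulation in 1-msec steps. This is a simplified model written for illustration, not the kernel's algorithm; the function name is hypothetical, and the model assumes a single replenishment of the full budget C at the start of each period T.

```c
/* Counts how many of the first duration_ms milliseconds a never-blocking,
 * never-preempted thread spends at its normal priority N, given an
 * execution budget C (budget_ms) and a replenishment period T (period_ms).
 * Returns -1 for invalid arguments. */
int ms_at_normal_priority(int budget_ms, int period_ms, int duration_ms)
{
    if (budget_ms < 0 || period_ms <= 0 || duration_ms < 0)
        return -1;

    int remaining = 0;      /* budget left in the current period */
    int at_normal = 0;      /* milliseconds spent at priority N so far */

    for (int t = 0; t < duration_ms; t++) {
        if (t % period_ms == 0) {
            /* replenishment: restore the budget (capped at the period) */
            remaining = budget_ms < period_ms ? budget_ms : period_ms;
        }
        if (remaining > 0) {
            remaining--;    /* running at normal priority N */
            at_normal++;
        }
        /* otherwise the thread sits at its low priority L until
         * the next replenishment */
    }
    return at_normal;
}
```

For a budget of 10 msec and a period of 40 msec, the thread spends 30 of the first 120 msec at its normal priority, which matches the C/T bound of one quarter.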

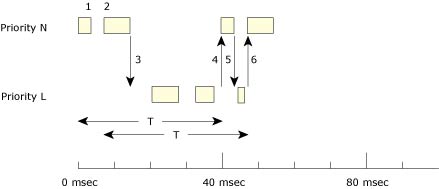

When a thread blocks multiple times, then several replenishment operations may be started and occur at different times. This could mean that the thread's execution budget will total C within a period T; however, the execution budget may not be contiguous during that period.

A thread oscillates between high and low priority.

In the diagram above, the thread has a budget (C) of 10 msec to be consumed within each 40-msec replenishment period (T).

And so on. The thread will continue to oscillate between its two priority levels, servicing aperiodic events in your system in a controlled, predictable manner.

A thread's priority can vary during its execution, either from direct manipulation by the thread itself or from the kernel adjusting the thread's priority as it receives a message from a higher-priority thread.

In addition to priority, you can also select the scheduling algorithm that the kernel will use for the thread. Although our libraries provide a number of different ways to get and set the scheduling parameters, your best choices are pthread_getschedparam() and pthread_setschedparam(). For information about the other choices, see “Scheduling algorithms” in the Programming Overview chapter of the QNX Neutrino Programmer's Guide.
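Here's a minimal sketch of how the two recommended calls might be wrapped for the calling thread. The wrapper names are hypothetical. Note that setting a realtime policy or a higher priority can fail (for example, with EPERM) if the thread lacks the necessary privileges, so the return value should always be checked.

```c
#include <pthread.h>
#include <sched.h>

/* Fetch the calling thread's scheduling policy and priority.
 * Returns 0 on success or an error number. */
int get_own_schedule(int *policy, int *priority)
{
    struct sched_param param;
    int rc = pthread_getschedparam(pthread_self(), policy, &param);

    if (rc == 0)
        *priority = param.sched_priority;
    return rc;
}

/* Request a new policy (e.g. SCHED_FIFO or SCHED_RR) and priority for
 * the calling thread. Returns 0 on success or an error number. */
int set_own_schedule(int policy, int priority)
{
    struct sched_param param;
    int current_policy;

    /* Start from the current parameters so that any fields other than
     * the priority keep their existing values. */
    int rc = pthread_getschedparam(pthread_self(), &current_policy, &param);
    if (rc != 0)
        return rc;

    param.sched_priority = priority;
    return pthread_setschedparam(pthread_self(), policy, &param);
}

/* Human-readable name for the standard POSIX policies. */
const char *policy_name(int policy)
{
    switch (policy) {
    case SCHED_FIFO:  return "FIFO";
    case SCHED_RR:    return "round-robin";
    case SCHED_OTHER: return "other";
    default:          return "unknown";
    }
}
```

A thread could, for instance, call get_own_schedule() at startup, log policy_name() for the result, and then call set_own_schedule(SCHED_RR, priority) with a priority in the range it's allowed to use.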

Since all the threads in a process have unhindered access to the shared data space, wouldn't this execution model “trivially” solve all of our IPC problems? Can't we just communicate the data through shared memory and dispense with any other execution models and IPC mechanisms?

If only it were that simple!

One issue is that the access of individual threads to common data must be synchronized. Having one thread read inconsistent data because another thread is part way through modifying it is a recipe for disaster. For example, if one thread is updating a linked list, no other threads can be allowed to traverse or modify the list until the first thread has finished. A code passage that must execute “serially” (i.e. by only one thread at a time) in this manner is termed a “critical section.” The program would fail (intermittently, depending on how frequently a “collision” occurred) with irreparably damaged links unless some synchronization mechanism ensured serial access.

Mutexes, semaphores, and condvars are examples of synchronization tools that can be used to address this problem. These tools are described later in this section.

Although synchronization services can be used to allow threads to cooperate, shared memory per se can't address a number of IPC issues. For example, although threads can communicate through the common data space, this works only if all the threads communicating are within a single process. What if our application needs to communicate a query to a database server? We need to pass the details of our query to the database server, but the thread we need to communicate with lies within a database server process and the address space of that server isn't addressable to us.

The OS takes care of the network-distributed IPC issue because the one interface — message passing — operates in both the local and network-remote cases, and can be used to access all OS services. Since messages can be exactly sized, and since most messages tend to be quite tiny (e.g. the error status on a write request, or a tiny read request), the data moved around the network can be far less with message passing than with network-distributed shared memory, which would tend to copy 4K pages around.

Although threads are very appropriate for some system designs, it's important to respect the Pandora's box of complexities their use unleashes. In some ways, it's ironic that while MMU-protected multitasking has become common, computing fashion has made popular the use of multiple threads in an unprotected address space. This not only makes debugging difficult, but also hampers the generation of reliable code.

Threads were initially introduced to UNIX systems as a “lightweight” concurrency mechanism to address the problem of slow context switches between “heavyweight” processes. Although this is a worthwhile goal, an obvious question arises: Why are process-to-process context switches slow in the first place?

Architecturally, the OS addresses the context-switch performance issue first. In fact, threads and processes provide nearly identical context-switch performance numbers. QNX Neutrino's process-switch times are faster than UNIX thread-switch times. As a result, QNX Neutrino threads don't need to be used to solve the IPC performance problem; instead, they're a tool for achieving greater concurrency within application and server processes.

Without resorting to threads, fast process-to-process context switching makes it reasonable to structure an application as a team of cooperating processes sharing an explicitly allocated shared-memory region. An application thus exposes itself to bugs in the cooperating processes only so far as the effects of those bugs on the contents of the shared-memory region. The private memory of the process is still protected from the other processes. In the purely threaded model, the private data of all threads (including their stacks) is openly accessible, vulnerable to stray pointer errors in any thread in the process.

Nevertheless, threads can also provide concurrency advantages that a pure process model cannot address. For example, a filesystem server process that executes requests on behalf of many clients (where each request takes significant time to complete), definitely benefits from having multiple threads of execution. If one client process requests a block from disk, while another client requests a block already in cache, the filesystem process can utilize a pool of threads to concurrently service client requests, rather than remain “busy” until the disk block is read for the first request.

As requests arrive, each thread is able to respond directly from the buffer cache or to block and wait for disk I/O without increasing the response latency seen by other client processes. The filesystem server can “precreate” a team of threads, ready to respond in turn to client requests as they arrive. Although this complicates the architecture of the filesystem manager, the gains in concurrency are significant.

QNX Neutrino provides the POSIX-standard thread-level synchronization primitives, some of which are useful even between threads in different processes. The synchronization services include at least the following:

| Synchronization service | Supported between processes | Supported across a QNX LAN |

|---|---|---|

| Mutexes | Yes | No |

| Condvars | Yes | No |

| Barriers | No | No |

| Sleepon locks | No | No |

| Reader/writer locks | Yes | No |

| Semaphores | Yes | Yes (named only) |

| FIFO scheduling | Yes | No |

| Send/Receive/Reply | Yes | Yes |

| Atomic operations | Yes | No |

The above synchronization primitives are implemented directly by the kernel, except for:

You should allocate mutexes, condvars, barriers, reader/writer locks, and semaphores, as well as objects you plan to use atomic operations on, only in normal memory mappings. On certain processors (e.g. some PPC ones), atomic operations and calls such as pthread_mutex_lock() will cause a fault if the object is allocated in uncached memory.

Mutual exclusion locks, or mutexes, are the simplest of the synchronization services. A mutex is used to ensure exclusive access to data shared between threads. It is typically acquired (pthread_mutex_lock() or pthread_mutex_timedlock()) and released (pthread_mutex_unlock()) around the code that accesses the shared data (usually a critical section).

Only one thread may have the mutex locked at any given time. Threads attempting to lock an already locked mutex will block until the thread that owns the mutex unlocks it. When the thread unlocks the mutex, the highest-priority thread waiting to lock the mutex will unblock and become the new owner of the mutex. In this way, threads will sequence through a critical region in priority-order.

On most processors, acquisition of a mutex doesn't require entry to the kernel for a free mutex. What allows this is the use of the compare-and-swap opcode on x86 processors and the load/store conditional opcodes on most RISC processors.

Entry to the kernel is done at acquisition time only if the mutex is already held so that the thread can go on a blocked list; kernel entry is done on exit if other threads are waiting to be unblocked on that mutex. This allows acquisition and release of an uncontested critical section or resource to be very quick, incurring work by the OS only to resolve contention.
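The idea of an uncontested fast path can be illustrated with the C11 atomic compare-and-swap operation. The following is only a sketch of the technique, not the QNX mutex implementation: the fast_lock_t type and its functions are hypothetical, and the slow path (entering the kernel to block or to wake a waiter) is deliberately left out.

```c
#include <stdatomic.h>
#include <stdbool.h>

typedef struct {
    atomic_int owner;       /* 0 = free, otherwise the owner's nonzero ID */
} fast_lock_t;

/* Fast path: take the lock with a single compare-and-swap if it's free.
 * Returns true if acquired. On false, a real mutex would enter the
 * kernel so the caller could be placed on a blocked list. */
bool fast_lock_try_acquire(fast_lock_t *lock, int self_id)
{
    int expected = 0;
    return atomic_compare_exchange_strong(&lock->owner, &expected, self_id);
}

/* Release the lock, but only if the caller is the current owner.
 * A real mutex would also check for waiters here and enter the
 * kernel to unblock one of them. */
bool fast_lock_release(fast_lock_t *lock, int self_id)
{
    int expected = self_id;
    return atomic_compare_exchange_strong(&lock->owner, &expected, 0);
}
```

In the uncontested case, both acquisition and release complete entirely in user space with one atomic instruction each, which is why no kernel entry is needed.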

A nonblocking lock function (pthread_mutex_trylock()) can be used to test whether the mutex is currently locked or not. For best performance, the execution time of the critical section should be small and of bounded duration. A condvar should be used if the thread may block within the critical section.
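The following sketch shows a short, bounded critical section protected by a mutex, along with a nonblocking test using pthread_mutex_trylock(). The shared counter and the function names are hypothetical examples; the limit of 16 threads is an arbitrary choice for the sketch.

```c
#include <errno.h>
#include <pthread.h>

#define ADDS_PER_THREAD 100000
#define MAX_ADDERS      16

static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;
static long shared_total;           /* data protected by counter_lock */

/* Each adder thread increments the shared total inside a very short
 * critical section. */
static void *adder(void *unused)
{
    (void)unused;
    for (int i = 0; i < ADDS_PER_THREAD; i++) {
        pthread_mutex_lock(&counter_lock);
        shared_total++;
        pthread_mutex_unlock(&counter_lock);
    }
    return NULL;
}

/* Runs nthreads adders concurrently and returns the final total,
 * or -1 if the arguments are invalid or a thread couldn't be created. */
long run_adders(int nthreads)
{
    pthread_t tids[MAX_ADDERS];
    int started = 0;

    if (nthreads < 1 || nthreads > MAX_ADDERS)
        return -1;

    shared_total = 0;
    while (started < nthreads &&
           pthread_create(&tids[started], NULL, adder, NULL) == 0)
        started++;
    for (int i = 0; i < started; i++)
        pthread_join(tids[i], NULL);

    return started == nthreads ? shared_total : -1;
}

/* Nonblocking test: returns 1 if the mutex is currently locked,
 * 0 if it was free (it's released again immediately), -1 on error. */
int counter_is_busy(void)
{
    int rc = pthread_mutex_trylock(&counter_lock);

    if (rc == 0) {
        pthread_mutex_unlock(&counter_lock);
        return 0;
    }
    return rc == EBUSY ? 1 : -1;
}
```

Without the lock and unlock calls around the increment, concurrent updates could be lost and the total would intermittently fall short of nthreads × ADDS_PER_THREAD.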

By default, if a thread with a higher priority than the mutex owner attempts to lock a mutex, then the effective priority of the current owner is increased to that of the higher-priority blocked thread waiting for the mutex. The current owner returns to its real priority when it unlocks the mutex. This scheme not only ensures that the higher-priority thread will be blocked waiting for the mutex for the shortest possible time, but also solves the classic priority-inversion problem.

The pthread_mutexattr_init() function sets the protocol to PTHREAD_PRIO_INHERIT to allow this behavior; you can call pthread_mutexattr_setprotocol() to override this setting. The pthread_mutex_trylock() function doesn't change the thread priorities because it doesn't block.
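If your code may also be built on other POSIX platforms, where a freshly initialized attribute object might default to a different protocol, you can request priority inheritance explicitly. The sketch below assumes only the standard POSIX attribute calls; the helper names are hypothetical.

```c
#define _POSIX_C_SOURCE 200809L
#include <pthread.h>

/* Initialize *m as a mutex that uses the priority-inheritance protocol,
 * requested explicitly rather than relying on the default.
 * Returns 0 on success or an error number. */
int init_inheriting_mutex(pthread_mutex_t *m)
{
    pthread_mutexattr_t attr;
    int rc = pthread_mutexattr_init(&attr);

    if (rc != 0)
        return rc;

    rc = pthread_mutexattr_setprotocol(&attr, PTHREAD_PRIO_INHERIT);
    if (rc == 0)
        rc = pthread_mutex_init(m, &attr);

    pthread_mutexattr_destroy(&attr);   /* the mutex keeps its own copy */
    return rc;
}

/* Report which protocol a freshly initialized attribute object carries
 * on this platform, or -1 on error. */
int default_mutex_protocol(void)
{
    pthread_mutexattr_t attr;
    int protocol = -1;

    if (pthread_mutexattr_init(&attr) != 0)
        return -1;
    if (pthread_mutexattr_getprotocol(&attr, &protocol) != 0)
        protocol = -1;
    pthread_mutexattr_destroy(&attr);
    return protocol;
}
```

Comparing the result of default_mutex_protocol() with PTHREAD_PRIO_INHERIT is one way to check at run time whether the default described above applies on the system you're running on.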

You can also modify the attributes of the mutex (using pthread_mutexattr_setrecursive()) to allow a mutex to be recursively locked by the same thread. This can be useful to allow a thread to call a routine that might attempt to lock a mutex that the thread already happens to have locked.

Recursive mutexes are non-POSIX services; they don't work with condvars.

A condition variable, or condvar, is used to block a thread within a critical section until some condition is satisfied. The condition can be arbitrarily complex and is independent of the condvar. However, the condvar must always be used with a mutex lock in order to implement a monitor.

A condvar supports three operations:

Note that there's no connection between a condvar signal and a POSIX signal.

Here's a typical example of how a condvar can be used:

```c
pthread_mutex_lock( &m );
. . .
while (!arbitrary_condition) {
    pthread_cond_wait( &cv, &m );
}
. . .
pthread_mutex_unlock( &m );
```

In this code sample, the mutex is acquired before the condition is tested. This ensures that only this thread has access to the arbitrary condition being examined. While the condition is false, the code sample will block on the wait call until some other thread performs a signal or broadcast on the condvar.

The while loop is required for two reasons. First of all, POSIX cannot guarantee that spurious wakeups will not occur (e.g. on multiprocessor systems). Second, when another thread has made a modification to the condition, we need to retest to ensure that the modification matches our criteria. The associated mutex is unlocked atomically by pthread_cond_wait() when the waiting thread is blocked to allow another thread to enter the critical section.

A thread that performs a signal will unblock the highest-priority thread queued on the condvar, while a broadcast will unblock all threads queued on the condvar. The associated mutex is locked atomically by the highest-priority unblocked thread; the thread must then unlock the mutex after proceeding through the critical section.

A version of the condvar wait operation allows a timeout to be specified (pthread_cond_timedwait()). The waiting thread can then be unblocked when the timeout expires.
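Putting the pieces together, the following sketch shows a one-slot “mailbox” in which a consumer waits with a timeout for a value posted by a producer. The mailbox_t type and its functions are hypothetical names used for illustration; the sketch assumes the condvar uses the default CLOCK_REALTIME clock for its absolute timeout.

```c
#define _POSIX_C_SOURCE 200809L
#include <errno.h>
#include <pthread.h>
#include <stdbool.h>
#include <time.h>

typedef struct {
    pthread_mutex_t m;
    pthread_cond_t  cv;
    bool            ready;      /* the condition being waited for */
    int             value;
} mailbox_t;

#define MAILBOX_INIT \
    { PTHREAD_MUTEX_INITIALIZER, PTHREAD_COND_INITIALIZER, false, 0 }

/* Producer: change the condition under the mutex, then signal. */
void mailbox_post(mailbox_t *mb, int value)
{
    pthread_mutex_lock(&mb->m);
    mb->value = value;
    mb->ready = true;
    pthread_cond_signal(&mb->cv);
    pthread_mutex_unlock(&mb->m);
}

/* Consumer: wait up to timeout_ms for a value. Returns 0 and stores the
 * value in *out on success, ETIMEDOUT if nothing arrived in time, or
 * EINVAL for a negative timeout. */
int mailbox_take(mailbox_t *mb, int timeout_ms, int *out)
{
    struct timespec deadline;
    int rc = 0;

    if (timeout_ms < 0)
        return EINVAL;

    /* pthread_cond_timedwait() takes an absolute time. */
    clock_gettime(CLOCK_REALTIME, &deadline);
    deadline.tv_sec  += timeout_ms / 1000;
    deadline.tv_nsec += (long)(timeout_ms % 1000) * 1000000L;
    if (deadline.tv_nsec >= 1000000000L) {
        deadline.tv_sec++;
        deadline.tv_nsec -= 1000000000L;
    }

    pthread_mutex_lock(&mb->m);
    while (!mb->ready && rc == 0)       /* retest after every wakeup */
        rc = pthread_cond_timedwait(&mb->cv, &mb->m, &deadline);
    if (mb->ready) {                    /* the value wins over a late timeout */
        *out = mb->value;
        mb->ready = false;
        rc = 0;
    }
    pthread_mutex_unlock(&mb->m);
    return rc;
}

/* Thread body for a producer that posts the value 42. */
void *mailbox_poster(void *arg)
{
    mailbox_post(arg, 42);
    return NULL;
}
```

A consumer that calls mailbox_take() on an empty mailbox gets ETIMEDOUT once the deadline passes; if another thread runs mailbox_poster() on the same mailbox first, the call returns 0 with the posted value.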

A barrier is a synchronization mechanism that lets you “corral” several cooperating threads (e.g. in a matrix computation), forcing them to wait at a specific point until all have finished before any one thread can continue.

Unlike the pthread_join() function, where you'd wait for the threads to terminate, in the case of a barrier you're waiting for the threads to rendezvous at a certain point. When the specified number of threads arrive at the barrier, we unblock all of them so they can continue to run.

You first create a barrier with pthread_barrier_init():

```c
#include <pthread.h>

int
pthread_barrier_init (pthread_barrier_t *barrier,
                      const pthread_barrierattr_t *attr,
                      unsigned int count);
```

This creates a barrier object at the passed address (a pointer to the barrier object is in barrier), with the attributes as specified by attr. The count argument holds the number of threads that must call pthread_barrier_wait().

Once the barrier is created, each thread will call pthread_barrier_wait() to indicate that it has completed:

```c
#include <pthread.h>

int pthread_barrier_wait (pthread_barrier_t *barrier);
```

When a thread calls pthread_barrier_wait(), it blocks until the number of threads specified initially in the pthread_barrier_init() function have called pthread_barrier_wait() (and blocked also). When the correct number of threads have called pthread_barrier_wait(), all those threads will unblock at the same time.

Here's an example:

/*

* barrier1.c

*/

#include <stdio.h>

#include <unistd.h>

#include <stdlib.h>

#include <time.h>

#include <pthread.h>

#include <sys/neutrino.h>

pthread_barrier_t barrier; // barrier synchronization object

void *

thread1 (void *not_used)

{

time_t now;

time (&now);

printf ("thread1 starting at %s", ctime (&now));

// do the computation

// let's just do a sleep here...

sleep (20);

pthread_barrier_wait (&barrier);

// after this point, all three threads have completed.

time (&now);

printf ("barrier in thread1() done at %s", ctime (&now));

return NULL;

}

void *

thread2 (void *not_used)

{

time_t now;

time (&now);

printf ("thread2 starting at %s", ctime (&now));

// do the computation

// let's just do a sleep here...

sleep (40);

pthread_barrier_wait (&barrier);

// after this point, all three threads have completed.

time (&now);

printf ("barrier in thread2() done at %s", ctime (&now));

return NULL;

}

int main () // ignore arguments

{

time_t now;

// create a barrier object with a count of 3

pthread_barrier_init (&barrier, NULL, 3);

// start up two threads, thread1 and thread2

pthread_create (NULL, NULL, thread1, NULL);

pthread_create (NULL, NULL, thread2, NULL);

// at this point, thread1 and thread2 are running

// now wait for completion

time (&now);

printf ("main() waiting for barrier at %s", ctime (&now));

pthread_barrier_wait (&barrier);

// after this point, all three threads have completed.

time (&now);

printf ("barrier in main() done at %s", ctime (&now));

pthread_exit( NULL );

return (EXIT_SUCCESS);

}

The main thread created the barrier object and initialized it with a count of the total number of threads that must be synchronized to the barrier before the threads may carry on. In the example above, we used a count of 3: one for the main() thread, one for thread1(), and one for thread2().

Then we start thread1() and thread2(). To simplify this example, we have the threads sleep to cause a delay, as if computations were occurring. To synchronize, the main thread simply blocks itself on the barrier, knowing that the barrier will unblock only after the two worker threads have joined it as well.

In this release, the following barrier functions are included:

| Function | Description |

|---|---|

| pthread_barrierattr_getpshared() | Get the value of a barrier's process-shared attribute |

| pthread_barrierattr_destroy() | Destroy a barrier's attributes object |

| pthread_barrierattr_init() | Initialize a barrier's attributes object |

| pthread_barrierattr_setpshared() | Set the value of a barrier's process-shared attribute |

| pthread_barrier_destroy() | Destroy a barrier |

| pthread_barrier_init() | Initialize a barrier |

| pthread_barrier_wait() | Synchronize participating threads at the barrier |
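The following sketch walks through the barrier life cycle using the functions in the table: initialize, wait, destroy. It also relies on one detail from the POSIX specification that isn't covered above: exactly one of the released threads receives PTHREAD_BARRIER_SERIAL_THREAD from pthread_barrier_wait(), which is handy for electing a thread to do one-time work. The names `worker()`, `run_barrier_demo()`, and `NUM_WORKERS` are made up for this illustration:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose the POSIX barrier API */
#endif
#include <pthread.h>

#define NUM_WORKERS 4

static pthread_barrier_t barrier;
static pthread_mutex_t count_lock = PTHREAD_MUTEX_INITIALIZER;
static int serial_count;          /* threads that saw PTHREAD_BARRIER_SERIAL_THREAD */

static void *worker(void *arg)
{
    (void)arg;
    /* Rendezvous: block until NUM_WORKERS threads have arrived. */
    int rc = pthread_barrier_wait(&barrier);
    if (rc == PTHREAD_BARRIER_SERIAL_THREAD) {
        pthread_mutex_lock(&count_lock);
        serial_count++;
        pthread_mutex_unlock(&count_lock);
    }
    return NULL;
}

/* Returns how many threads were designated the "serial" thread,
 * or -1 if the barrier or a thread couldn't be created. */
int run_barrier_demo(void)
{
    pthread_t tid[NUM_WORKERS];
    int i;

    serial_count = 0;
    if (pthread_barrier_init(&barrier, NULL, NUM_WORKERS) != 0)
        return -1;
    for (i = 0; i < NUM_WORKERS; i++) {
        /* Simplification: a real program would also clean up threads already started. */
        if (pthread_create(&tid[i], NULL, worker, NULL) != 0)
            return -1;
    }
    for (i = 0; i < NUM_WORKERS; i++)
        pthread_join(tid[i], NULL);

    /* Destroy the barrier only when no thread can still be blocked on it. */
    pthread_barrier_destroy(&barrier);
    return serial_count;
}
```

Joining the workers before calling pthread_barrier_destroy() ensures that no thread is still inside pthread_barrier_wait() when the barrier goes away.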

Sleepon locks are very similar to condvars, with a few subtle differences. Like condvars, sleepon locks (pthread_sleepon_lock()) can be used to block until a condition becomes true (like a memory location changing value). But unlike condvars, which must be allocated for each condition to be checked, sleepon locks multiplex their functionality over a single mutex and dynamically allocated condvar, regardless of the number of conditions being checked. The maximum number of condvars ends up being equal to the maximum number of blocked threads. These locks are patterned after the sleepon locks commonly used within the UNIX kernel.

Reader/writer locks, more formally known as “multiple readers, single writer locks,” are used when the access pattern for a data structure consists of many threads reading the data, and (at most) one thread writing the data. These locks are more expensive than mutexes, but can be useful for this data access pattern.

This lock works by allowing all the threads that request a read-access lock (pthread_rwlock_rdlock()) to succeed in their request. But when a thread wishing to write asks for the lock (pthread_rwlock_wrlock()), the request is denied until all the current reading threads release their reading locks (pthread_rwlock_unlock()).

Multiple writing threads can queue (in priority order) waiting for their chance to write the protected data structure, and all the blocked writer-threads will get to run before reading threads are allowed access again. The priorities of the reading threads are not considered.

There are also calls (pthread_rwlock_tryrdlock() and pthread_rwlock_trywrlock()) to allow a thread to test the attempt to achieve the requested lock, without blocking. These calls return with a successful lock or a status indicating that the lock couldn't be granted immediately.

Reader/writer locks aren't implemented directly within the kernel, but are instead built from the mutex and condvar services provided by the kernel.
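Here's a small sketch of the reader/writer calls described above, using only the standard POSIX functions. The protected variable `table_value` and the helper names are made up for this illustration:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose the POSIX rwlock API */
#endif
#include <errno.h>
#include <pthread.h>

static pthread_rwlock_t table_lock = PTHREAD_RWLOCK_INITIALIZER;
static int table_value = 0;       /* hypothetical shared data */

/* Any number of threads may hold the read lock at the same time. */
int read_value(void)
{
    int v;
    pthread_rwlock_rdlock(&table_lock);
    v = table_value;
    pthread_rwlock_unlock(&table_lock);
    return v;
}

/* A writer blocks until every current reader has released the lock. */
void write_value(int v)
{
    pthread_rwlock_wrlock(&table_lock);
    table_value = v;
    pthread_rwlock_unlock(&table_lock);
}

/* Non-blocking variant: returns 0 on success, or an error number
 * (typically EBUSY) if the lock couldn't be granted immediately. */
int try_write_value(int v)
{
    int rc = pthread_rwlock_trywrlock(&table_lock);
    if (rc == 0) {
        table_value = v;
        pthread_rwlock_unlock(&table_lock);
    }
    return rc;
}
```

While any read lock is held, `try_write_value()` returns an error instead of blocking, whereas `write_value()` would wait for the readers to finish.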

Semaphores are another common form of synchronization that allows threads to “post” (sem_post()) and “wait” (sem_wait()) on a semaphore to control when threads wake or sleep. The post operation increments the semaphore; the wait operation decrements it.

If you wait on a semaphore that is positive, you will not block. Waiting on a nonpositive semaphore will block until some other thread executes a post. It is valid to post one or more times before a wait. This use will allow one or more threads to execute the wait without blocking.
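To make the counting behavior concrete, this sketch posts a semaphore several times and then counts how many waits succeed without blocking. It uses sem_trywait() so that the example itself never blocks; `count_nonblocking_waits()` is a made-up helper name:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose the POSIX semaphore API */
#endif
#include <semaphore.h>

/* Post an unnamed semaphore `posts` times, then return the number of
 * waits that complete without blocking (or -1 if sem_init() fails). */
int count_nonblocking_waits(unsigned posts)
{
    sem_t sem;
    unsigned i;
    int succeeded = 0;

    if (sem_init(&sem, 0, 0) == -1)   /* not shared between processes, initial value 0 */
        return -1;

    for (i = 0; i < posts; i++)
        sem_post(&sem);               /* each post increments the semaphore */

    /* Each successful wait decrements it; once the value is back to 0,
     * sem_trywait() fails where sem_wait() would have blocked. */
    while (sem_trywait(&sem) == 0)
        succeeded++;

    sem_destroy(&sem);
    return succeeded;
}
```

Three posts allow exactly three waits to proceed: `count_nonblocking_waits(3)` returns 3.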

A significant difference between semaphores and other synchronization primitives is that semaphores are “async safe” and can be manipulated by signal handlers. If the desired effect is to have a signal handler wake a thread, semaphores are the right choice.
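The sketch below shows a signal handler waking a thread through a semaphore. It's an illustration under simple assumptions: SIGUSR1 is chosen arbitrarily, and raise() stands in for a signal that would normally arrive asynchronously. The names `on_signal()` and `wake_from_signal_handler()` are made up:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose sigaction() and the semaphore API */
#endif
#include <errno.h>
#include <semaphore.h>
#include <signal.h>
#include <string.h>

static sem_t wake_sem;

/* sem_post() is async-signal-safe, so it may be called from a handler. */
static void on_signal(int signo)
{
    (void)signo;
    sem_post(&wake_sem);
}

/* Returns 0 if the thread was woken by the handler's post, -1 on error. */
int wake_from_signal_handler(void)
{
    struct sigaction sa;
    int rc;

    if (sem_init(&wake_sem, 0, 0) == -1)
        return -1;

    memset(&sa, 0, sizeof sa);
    sa.sa_handler = on_signal;
    sigemptyset(&sa.sa_mask);
    if (sigaction(SIGUSR1, &sa, NULL) == -1) {
        sem_destroy(&wake_sem);
        return -1;
    }

    raise(SIGUSR1);               /* stand-in for an asynchronous signal */

    /* Retry if the wait itself is interrupted by a signal. */
    do {
        rc = sem_wait(&wake_sem);
    } while (rc == -1 && errno == EINTR);

    sem_destroy(&wake_sem);
    return rc;
}
```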

Note that in general, mutexes are much faster than semaphores, which always require a kernel entry. Semaphores don't affect a thread's effective priority; if you need priority inheritance, use a mutex. For more information, see “Mutexes: mutual exclusion locks,” earlier in this chapter.

Another useful property of semaphores is that they were defined to operate between processes. Although our mutexes work between processes, the POSIX thread standard considers this an optional capability and as such may not be portable across systems. For synchronization between threads in a single process, mutexes will be more efficient than semaphores.

As a useful variation, a named semaphore service is also available. It lets you use semaphores between processes on different machines connected by a network.

Note that named semaphores are slower than the unnamed variety.

Since semaphores, like condition variables, can legally return a nonzero value because of a false wake-up, correct usage requires a loop:

while (sem_wait(&s) && (errno == EINTR)) { do_nothing(); }

do_critical_region(); /* Semaphore was decremented */

By selecting the POSIX FIFO scheduling algorithm, we can guarantee that no two threads of the same priority execute the critical section concurrently on a non-SMP system. The FIFO scheduling algorithm dictates that all FIFO-scheduled threads in the system at the same priority will run, when scheduled, until they voluntarily release the processor to another thread.

This “release” can also occur when the thread blocks as part of requesting the service of another process, or when a signal occurs. The critical region must therefore be carefully coded and documented so that later maintenance of the code doesn't violate this condition.

In addition, higher-priority threads in that (or any other) process could still preempt these FIFO-scheduled threads. So, all the threads that could “collide” within the critical section must be FIFO-scheduled at the same priority. Having enforced this condition, the threads can then casually access this shared memory without having to first make explicit synchronization calls.

This exclusive-access relationship doesn't apply in multiprocessor systems, since each CPU could run a thread simultaneously through the region that would otherwise be serially scheduled on a single-processor machine.
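As a sketch of how the cooperating threads might be given the same FIFO policy and priority, the following prepares a thread attributes object with the standard POSIX calls. The helper name `init_fifo_attr()` is made up, and the priority to use is an application decision:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose the POSIX scheduling attributes API */
#endif
#include <pthread.h>
#include <sched.h>
#include <string.h>

/* Initialize *attr so that a thread created with it is scheduled
 * SCHED_FIFO at `priority`. Returns 0 on success or an error number. */
int init_fifo_attr(pthread_attr_t *attr, int priority)
{
    struct sched_param param;
    int rc;

    rc = pthread_attr_init(attr);
    if (rc != 0)
        return rc;

    /* Use the values set here instead of inheriting the creator's scheduling. */
    rc = pthread_attr_setinheritsched(attr, PTHREAD_EXPLICIT_SCHED);
    if (rc == 0)
        rc = pthread_attr_setschedpolicy(attr, SCHED_FIFO);
    if (rc == 0) {
        memset(&param, 0, sizeof param);
        param.sched_priority = priority;
        rc = pthread_attr_setschedparam(attr, &param);
    }
    if (rc != 0)
        pthread_attr_destroy(attr);
    return rc;
}
```

Every thread that can enter the critical section would be created with the same attributes, e.g. `pthread_create(&tid, &attr, worker, NULL)`, where `worker` is the application's thread function. Depending on the system, creating a FIFO-scheduled thread may require appropriate privileges.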

Our Send/Receive/Reply message-passing IPC services (described later) implement an implicit synchronization by their blocking nature. These IPC services can, in many instances, render other synchronization services unnecessary. They are also the only synchronization and IPC primitives (other than named semaphores, which are built on top of messaging) that can be used across the network.

In some cases, you may want to perform a short operation (such as incrementing a variable) with the guarantee that the operation will perform atomically — i.e. the operation won't be preempted by another thread or ISR (Interrupt Service Routine).

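Under QNX Neutrino, we provide atomic operations for adding a value, subtracting a value, clearing bits, setting bits, and toggling (complementing) bits.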

These atomic operations are available by including the C header file <atomic.h>.

Although you can use these atomic operations just about anywhere, you'll find them particularly useful in two cases: between an ISR and a thread, and between two threads (on SMP or single-processor systems).

Since an ISR can preempt a thread at any given point, the only way that the thread would be able to protect itself would be to disable interrupts. Since you should avoid disabling interrupts in a realtime system, we recommend that you use the atomic operations provided with QNX Neutrino.

On an SMP system, multiple threads can and do run concurrently. Again, we run into the same situation as with interrupts above — you should use the atomic operations where applicable to eliminate the need to disable and reenable interrupts.
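The sketch below illustrates the two-thread case. So that it can be compiled on any C11 toolchain, it uses the standard `<stdatomic.h>` interface as a stand-in; it does not use the QNX Neutrino `<atomic.h>` functions that this section describes. The names `bump()` and `run_two_incrementers()` are made up:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose the POSIX thread API */
#endif
#include <pthread.h>
#include <stdatomic.h>

#define INCREMENTS 100000

static atomic_uint counter;       /* shared by both threads, no mutex needed */

static void *bump(void *arg)
{
    int i;
    (void)arg;
    for (i = 0; i < INCREMENTS; i++)
        atomic_fetch_add(&counter, 1);   /* indivisible read-modify-write */
    return NULL;
}

/* Run two incrementing threads concurrently and return the final count
 * (0 if a thread couldn't be created). */
unsigned run_two_incrementers(void)
{
    pthread_t a, b;

    atomic_store(&counter, 0);
    if (pthread_create(&a, NULL, bump, NULL) != 0)
        return 0;
    if (pthread_create(&b, NULL, bump, NULL) != 0) {
        pthread_join(a, NULL);
        return 0;
    }
    pthread_join(a, NULL);
    pthread_join(b, NULL);
    return atomic_load(&counter);
}
```

Because each increment is atomic, no update is lost even when both threads run simultaneously on different processors. With a plain `counter++` on an ordinary variable, the final count could come up short.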

The following table lists the various microkernel calls and the higher-level POSIX calls constructed from them:

| Microkernel call | POSIX call | Description |

|---|---|---|

| SyncTypeCreate() | pthread_mutex_init(), pthread_cond_init(), sem_init() | Create object for mutex, condvars, and semaphore. |

| SyncDestroy() | pthread_mutex_destroy(), pthread_cond_destroy(), sem_destroy() | Destroy synchronization object. |

| SyncCondvarWait() | pthread_cond_wait(), pthread_cond_timedwait() | Block on a condvar. |

| SyncCondvarSignal() | pthread_cond_broadcast(), pthread_cond_signal() | Wake up condvar-blocked threads. |

| SyncMutexLock() | pthread_mutex_lock(), pthread_mutex_trylock() | Lock a mutex. |

| SyncMutexUnlock() | pthread_mutex_unlock() | Unlock a mutex. |

| SyncSemPost() | sem_post() | Post a semaphore. |

| SyncSemWait() | sem_wait(), sem_trywait() | Wait on a semaphore. |

Clock services are used to maintain the time of day, which is in turn used by the kernel timer calls to implement interval timers.

Valid dates on a QNX Neutrino system range from January 1970 to at least 2038. The time_t data type is an unsigned 32-bit number, which extends this range for many applications through 2106. The kernel itself uses unsigned 64-bit numbers to count the nanoseconds since January 1970, and so can handle dates through 2554. If your system must operate past 2554 and there's no way for the system to be upgraded or modified in the field, you'll have to take special care with system dates (contact us for help with this).
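The years quoted above follow from simple arithmetic on the size of each counter. As a rough check, the sketch below divides the largest representable number of seconds by the length of an average Gregorian year (an approximation that ignores exactly where in the year the counter runs out). The name `last_year()` is made up for this illustration:

```c
#include <stdint.h>

#define SECONDS_PER_YEAR 31556952ULL   /* 365.2425 days, the average Gregorian year */

/* Approximate calendar year in which a counter that starts in January 1970
 * and can hold at most max_seconds seconds runs out. */
unsigned last_year(uint64_t max_seconds)
{
    return 1970u + (unsigned)(max_seconds / SECONDS_PER_YEAR);
}
```

A signed 32-bit count of seconds gives `last_year(INT32_MAX)`, which is 2038; an unsigned 32-bit count gives `last_year(UINT32_MAX)`, which is 2106; and an unsigned 64-bit count of nanoseconds gives `last_year(UINT64_MAX / 1000000000ULL)`, which is 2554.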

The ClockTime() kernel call allows you to get or set the system clock specified by an ID (CLOCK_REALTIME), which maintains the system time. Once set, the system time increments by some number of nanoseconds based on the resolution of the system clock. This resolution can be queried or changed using the ClockPeriod() call.

Within the system page, an in-memory data structure, there's a 64-bit field (nsec) that holds the number of nanoseconds since the system was booted. The nsec field is always monotonically increasing and is never affected by setting the current time of day via ClockTime() or ClockAdjust().

The ClockCycles() function returns the current value of a free-running 64-bit cycle counter. This is implemented on each processor as a high-performance mechanism for timing short intervals. For example, on Intel x86 processors, an opcode that reads the processor's time-stamp counter is used. On a Pentium processor, this counter increments on each clock cycle. A 100 MHz Pentium would have a cycle time of 1/100,000,000 seconds (10 nanoseconds). Other CPU architectures have similar instructions.

On processors that don't implement such an instruction in hardware (e.g. a 386), the kernel will emulate one. This will provide a lower time resolution than if the instruction is provided (838.095345 nanoseconds on an IBM PC-compatible system).

In all cases, the SYSPAGE_ENTRY(qtime)->cycles_per_sec field gives the number of ClockCycles() increments in one second.
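To turn a difference between two ClockCycles() readings into time, divide by the cycles-per-second value. The helper below is a sketch of that conversion as plain arithmetic, so it doesn't call any kernel function itself; `cycles_to_ns()` is a made-up name:

```c
#include <stdint.h>

/* Convert a cycle count to nanoseconds, given the counter's rate.
 * The whole seconds and the remainder are converted separately so that the
 * multiplication doesn't overflow for rates below about 18 GHz. */
uint64_t cycles_to_ns(uint64_t cycles, uint64_t cycles_per_sec)
{
    uint64_t whole_sec = cycles / cycles_per_sec;
    uint64_t remainder = cycles % cycles_per_sec;

    return whole_sec * 1000000000ULL
         + (remainder * 1000000000ULL) / cycles_per_sec;
}
```

On a QNX Neutrino target, `cycles` would be the difference between two ClockCycles() results and `cycles_per_sec` would come from SYSPAGE_ENTRY(qtime)->cycles_per_sec. For the 100 MHz example above, `cycles_to_ns(1, 100000000)` yields 10.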

The ClockPeriod() function allows a thread to set the system timer to some multiple of nanoseconds; the OS kernel will do the best it can to satisfy the precision of the request with the hardware available.

The interval selected is always rounded down to an integral multiple of the precision of the underlying hardware timer. Of course, setting it to an extremely low value can result in a significant portion of CPU performance being consumed servicing timer interrupts.

| Microkernel call | POSIX call | Description |

|---|---|---|

| ClockTime() | clock_gettime(), clock_settime() | Get or set the time of day (using a 64-bit value in nanoseconds ranging from 1970 to 2554). |

| ClockAdjust() | N/A | Apply small time adjustments to synchronize clocks. |

| ClockCycles() | N/A | Read a 64-bit free-running high-precision counter. |

| ClockPeriod() | clock_getres() | Get or set the period of the clock. |

| ClockId() | clock_getcpuclockid(), pthread_getcpuclockid() | Return an integer that's passed to ClockTime() as a clockid_t. |

In order to facilitate applying time corrections without having the system experience abrupt “steps” in time (or even having time jump backwards), the ClockAdjust() call provides the option to specify an interval over which the time correction is to be applied. This has the effect of speeding or retarding time over a specified interval until the system has synchronized to the indicated current time. This service can be used to implement network-coordinated time averaging between multiple nodes on a network.
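The effect of spreading a correction over an interval can be modeled in a few lines. The sketch below is a simulation only: `struct slew_clock` and its functions are hypothetical names for this illustration and don't reflect the kernel's data structures or the ClockAdjust() interface.

```c
#include <stdint.h>

/* Hypothetical model of a clock whose correction is applied gradually. */
struct slew_clock {
    int64_t  now_ns;        /* current time of day */
    int64_t  tick_ns;       /* nominal increment per clock tick */
    int64_t  adjust_ns;     /* extra (or negative) increment per tick while slewing */
    uint32_t adjust_ticks;  /* ticks remaining in the adjustment interval */
};

/* Spread correction_ns evenly over the next `ticks` clock ticks.
 * Any remainder of the integer division is dropped in this simple model. */
void slew_start(struct slew_clock *c, int64_t correction_ns, uint32_t ticks)
{
    if (ticks == 0)
        return;
    c->adjust_ns = correction_ns / (int64_t)ticks;
    c->adjust_ticks = ticks;
}

/* Advance the clock by one tick, applying a share of any pending correction. */
void slew_tick(struct slew_clock *c)
{
    int64_t step = c->tick_ns;

    if (c->adjust_ticks > 0) {
        step += c->adjust_ns;
        c->adjust_ticks--;
    }
    c->now_ns += step;
}
```

For example, with a 1 ms tick, retarding the clock by 0.5 ms over 100 ticks shortens each tick by 5 microseconds. Time keeps moving forward on every tick, so there is no backward step.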

QNX Neutrino directly provides the full set of POSIX timer functionality. Since these timers are quick to create and manipulate, they're an inexpensive resource in the kernel.

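The POSIX timer model is quite rich, providing the ability to have the timer expire on an absolute date, on a relative date (i.e. n nanoseconds from now), or cyclically (i.e. every n nanoseconds).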

The cyclical mode is very significant, because the most common use of timers tends to be as a periodic source of events to “kick” a thread into life to do some processing and then go back to sleep until the next event. If the thread had to re-program the timer for every event, there would be the danger that time would slip unless the thread was programming an absolute date. Worse, if the thread doesn't get to run on the timer event because a higher-priority thread is running, the date next programmed into the timer could be one that has already elapsed!

The cyclical mode circumvents these problems by requiring that the thread set the timer once and then simply respond to the resulting periodic source of events.
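A minimal sketch of the cyclical mode with the standard POSIX timer calls follows. It assumes delivery by a queued realtime signal (SIGRTMIN, chosen arbitrarily) that the thread collects with sigwaitinfo(); the helper name `run_periodic()` is made up:

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L   /* expose the POSIX timer and signal APIs */
#endif
#include <errno.h>
#include <signal.h>
#include <string.h>
#include <time.h>

/* Arm a cyclical timer with the given period (less than one second) and
 * wait for `ticks` expirations. Returns the number seen, or -1 on error. */
int run_periodic(int ticks, long period_ns)
{
    sigset_t set;
    struct sigevent ev;
    struct itimerspec its;
    timer_t timer;
    int seen = 0;

    /* Block the signal so that it stays pending until sigwaitinfo() collects it.
     * In a multithreaded program, do this before creating the other threads. */
    sigemptyset(&set);
    sigaddset(&set, SIGRTMIN);
    if (pthread_sigmask(SIG_BLOCK, &set, NULL) != 0)
        return -1;

    memset(&ev, 0, sizeof ev);
    ev.sigev_notify = SIGEV_SIGNAL;
    ev.sigev_signo = SIGRTMIN;
    if (timer_create(CLOCK_MONOTONIC, &ev, &timer) == -1)
        return -1;

    /* Set the timer once: first expiry after one period, then every period. */
    its.it_value.tv_sec = 0;
    its.it_value.tv_nsec = period_ns;
    its.it_interval = its.it_value;
    if (timer_settime(timer, 0, &its, NULL) == -1) {
        timer_delete(timer);
        return -1;
    }

    while (seen < ticks) {
        if (sigwaitinfo(&set, NULL) == SIGRTMIN)
            seen++;                  /* periodic work would go here */
        else if (errno != EINTR)
            break;
    }

    timer_delete(timer);
    return seen;
}
```

The thread never reprograms the timer inside the loop, so the period doesn't drift even if the thread is occasionally late in responding to an expiry.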

Since timers are another source of events in the OS, they also make use of its event-delivery system. As a result, the application can request that any of the Neutrino-supported events be delivered to the application upon occurrence of a timeout.

An often-needed timeout service provided by the OS is the ability to specify the maximum time the application is prepared to wait for any given kernel call or request to complete. A problem with using generic OS timer services in a preemptive realtime OS is that in the interval between the specification of the timeout and the request for the service, a higher-priority process might have been scheduled to run and preempted long enough that the specified timeout will have expired before the service is even requested. The application will then end up requesting the service with an already lapsed timeout in effect (i.e. no timeout). This timing window can result in “hung” processes, inexplicable delays in data transmission protocols, and other problems.

alarm(...);

…

… ← Alarm fires here

…

blocking_call();

Our solution is a form of timeout request atomic to the service request itself. One approach might have been to provide an optional timeout parameter on every available service request, but this would overly complicate service requests with a passed parameter that would often go unused.

QNX Neutrino provides a TimerTimeout() kernel call that allows an application to specify a list of blocking states for which to start a specified timeout. Later, when the application makes a request of the kernel, the kernel will atomically enable the previously configured timeout if the application is about to block on one of the specified states.

Since the OS has a very small number of blocking states, this mechanism works very concisely. At the conclusion of either the service request or the timeout, the timer will be disabled and control will be given back to the application.

TimerTimeout(...);

…

…

…

blocking_call();

… ← Timer atomically armed within kernel

| Microkernel call | POSIX call | Description |

|---|---|---|

| TimerAlarm() | alarm() | Set a process alarm. |

| TimerCreate() | timer_create() | Create an interval timer. |

| TimerDestroy() | timer_delete() | Destroy an interval timer. |

| TimerInfo() | timer_gettime() | Get time remaining on an interval timer. |

| TimerInfo() | timer_getoverrun() | Get number of overruns on an interval timer. |

| TimerSettime() | timer_settime() | Start an interval timer. |

| TimerTimeout() | sleep(), nanosleep(), sigtimedwait(), pthread_cond_timedwait(), pthread_mutex_trylock() | Arm a kernel timeout for any blocking state. |

For more information, see the Clocks, Timers, and Getting a Kick Every So Often chapter of Getting Started with QNX Neutrino.

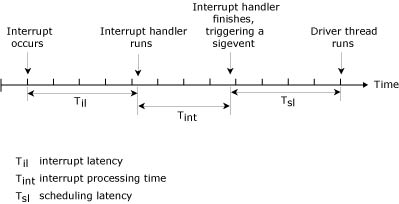

No matter how much we wish it were so, computers are not infinitely fast. In a realtime system, it's absolutely crucial that CPU cycles aren't unnecessarily spent. It's also crucial to minimize the time from the occurrence of an external event to the actual execution of code within the thread responsible for reacting to that event. This time is referred to as latency.

The two forms of latency that most concern us are interrupt latency and scheduling latency.

Latency times can vary significantly, depending on the speed of the processor and other factors. For more information, visit our website (www.qnx.com).

Interrupt latency is the time from the assertion of a hardware interrupt until the first instruction of the device driver's interrupt handler is executed. The OS leaves interrupts fully enabled almost all the time, so that interrupt latency is typically insignificant. But certain critical sections of code do require that interrupts be temporarily disabled. The maximum such disable time usually defines the worst-case interrupt latency — in QNX Neutrino this is very small.

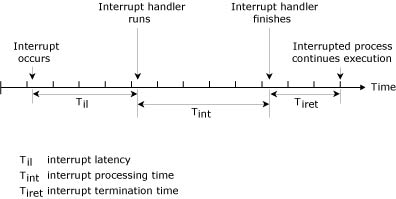

The following diagrams illustrate the case where a hardware interrupt is processed by an established interrupt handler. The interrupt handler either will simply return, or it will return and cause an event to be delivered.

Interrupt handler simply terminates.

The interrupt latency (Til) in the above diagram represents the minimum latency — that which occurs when interrupts were fully enabled at the time the interrupt occurred. Worst-case interrupt latency will be this time plus the longest time in which the OS, or the running system process, disables CPU interrupts.

In some cases, the low-level hardware interrupt handler must schedule a higher-level thread to run. In this scenario, the interrupt handler will return and indicate that an event is to be delivered. This introduces a second form of latency — scheduling latency — which must be accounted for.

Scheduling latency is the time between the last instruction of the user's interrupt handler and the execution of the first instruction of a driver thread. This usually means the time it takes to save the context of the currently executing thread and restore the context of the required driver thread. Although larger than interrupt latency, this time is also kept small in a QNX Neutrino system.

Interrupt handler terminates, returning an event.

It's important to note that most interrupts terminate without delivering an event. In a large number of cases, the interrupt handler can take care of all hardware-related issues. Delivering an event to wake up a higher-level driver thread occurs only when a significant event occurs. For example, the interrupt handler for a serial device driver would feed one byte of data to the hardware upon each received transmit interrupt, and would trigger the higher-level thread within (devc-ser*) only when the output buffer is nearly empty.

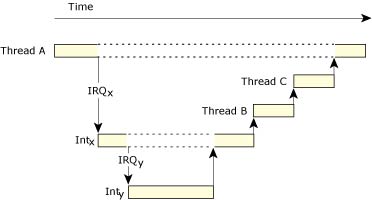

QNX Neutrino fully supports nested interrupts. The previous scenarios describe the simplest — and most common — situation where only one interrupt occurs. Worst-case timing considerations for unmasked interrupts must take into account the time for all interrupts currently being processed, because a higher priority, unmasked interrupt will preempt an existing interrupt.

In the following diagram, Thread A is running. Interrupt IRQx causes interrupt handler Intx to run, which is preempted by IRQy and its handler Inty. Inty returns an event causing Thread B to run; Intx returns an event causing Thread C to run.

Stacked interrupts.

The interrupt-handling API includes the following kernel calls:

| Function | Description |

|---|---|

| InterruptAttach() | Attach a local function to an interrupt vector. |

| InterruptAttachEvent() | Generate an event on an interrupt, which will ready a thread. No user interrupt handler runs. This is the preferred call. |

| InterruptDetach() | Detach from an interrupt using the ID returned by InterruptAttach() or InterruptAttachEvent(). |

| InterruptWait() | Wait for an interrupt. |

| InterruptEnable() | Enable hardware interrupts. |

| InterruptDisable() | Disable hardware interrupts. |

| InterruptMask() | Mask a hardware interrupt. |

| InterruptUnmask() | Unmask a hardware interrupt. |

| InterruptLock() | Guard a critical section of code between an interrupt handler and a thread. A spinlock is used to make this code SMP-safe. This function is a superset of InterruptDisable() and should be used in its place. |

| InterruptUnlock() | Remove an SMP-safe lock on a critical section of code. |

Using this API, a suitably privileged user-level thread can call InterruptAttach() or InterruptAttachEvent(), passing a hardware interrupt number and the address of a function in the thread's address space to be called when the interrupt occurs. QNX Neutrino allows multiple ISRs to be attached to each hardware interrupt number — unmasked interrupts can be serviced during the execution of running interrupt handlers.


The following code sample shows how to attach an ISR to the hardware timer interrupt on the PC (which the OS also uses for the system clock). Since the kernel's timer ISR is already dealing with clearing the source of the interrupt, this ISR can simply increment a counter variable in the thread's data space and return to the kernel:

#include <stdio.h>

#include <sys/neutrino.h>

#include <sys/syspage.h>

struct sigevent event;

volatile unsigned counter;

const struct sigevent *handler( void *area, int id ) {

// Wake up the thread every 100th interrupt

if ( ++counter == 100 ) {

counter = 0;

return( &event );

}

else

return( NULL );

}

int main() {

int i;

int id;

// Request I/O privileges

ThreadCtl( _NTO_TCTL_IO, 0 );

// Initialize event structure

event.sigev_notify = SIGEV_INTR;

// Attach ISR vector

id=InterruptAttach( SYSPAGE_ENTRY(qtime)->intr, &handler,

NULL, 0, 0 );

for( i = 0; i < 10; ++i ) {

// Wait for ISR to wake us up

InterruptWait( 0, NULL );

printf( "100 events\n" );

}

// Disconnect the ISR handler

InterruptDetach(id);

return 0;

}

With this approach, appropriately privileged user-level threads can dynamically attach (and detach) interrupt handlers to (and from) hardware interrupt vectors at run time. These threads can be debugged using regular source-level debug tools; the ISR itself can be debugged by calling it at the thread level and source-level stepping through it or by using the InterruptAttachEvent() call.

When the hardware interrupt occurs, the processor will enter the interrupt redirector in the microkernel. This code pushes the registers for the context of the currently running thread into the appropriate thread table entry and sets the processor context such that the ISR has access to the code and data that are part of the thread the ISR is contained within. This allows the ISR to use the buffers and code in the user-level thread to resolve the interrupt and, if higher-level work by the thread is required, to queue an event to the thread the ISR is part of, which can then work on the data the ISR has placed into thread-owned buffers.

Since it runs with the memory-mapping of the thread containing it, the ISR can directly manipulate devices mapped into the thread's address space, or directly perform I/O instructions. As a result, device drivers that manipulate hardware don't need to be linked into the kernel.

The interrupt redirector code in the microkernel will call each ISR attached to that hardware interrupt. If the value returned indicates that a process is to be passed an event of some sort, the kernel will queue the event. When the last ISR has been called for that vector, the kernel interrupt handler will finish manipulating the interrupt control hardware and then “return from interrupt.”

This interrupt return won't necessarily be into the context of the thread that was interrupted. If the queued event caused a higher-priority thread to become READY, the microkernel will then interrupt-return into the context of the now-READY thread instead.

This approach provides a well-bounded interval from the occurrence of the interrupt to the execution of the first instruction of the user-level ISR (measured as interrupt latency), and from the last instruction of the ISR to the first instruction of the thread readied by the ISR (measured as thread or process scheduling latency).

The worst-case interrupt latency is well-bounded, because the OS disables interrupts only for a couple of opcodes in a few critical regions. Those intervals when interrupts are disabled have deterministic runtimes, because they're not data dependent.

The microkernel's interrupt redirector executes only a few instructions before calling the user's ISR. As a result, process preemption for hardware interrupts or kernel calls is equally quick and exercises essentially the same code path.

While the ISR is executing, it has full hardware access (since it's part of a privileged thread), but can't issue other kernel calls. The ISR is intended to respond to the hardware interrupt in as few microseconds as possible, do the minimum amount of work to satisfy the interrupt (read the byte from the UART, etc.), and if necessary, cause a thread to be scheduled at some user-specified priority to do further work.

Worst-case interrupt latency is directly computable for a given hardware priority from the kernel-imposed interrupt latency and the maximum ISR runtime for each interrupt higher in hardware priority than the ISR in question. Since hardware interrupt priorities can be reassigned, the most important interrupt in the system can be made the highest priority.
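One way to read this computation is as a simple sum: the kernel-imposed latency plus the maximum runtime of every ISR at a higher hardware priority. The sketch below encodes that reading, under the simplifying assumption that each higher-priority interrupt fires at most once in the interval. The function name and the sample figures in the usage note are hypothetical:

```c
#include <stddef.h>
#include <stdint.h>

/* isr_max_ns[] holds the maximum ISR runtime for each interrupt, ordered from
 * the highest hardware priority (index 0) downward. Returns the worst-case
 * latency for the interrupt at position `index`. */
uint64_t worst_case_latency_ns(uint64_t kernel_latency_ns,
                               const uint64_t *isr_max_ns, size_t index)
{
    uint64_t total = kernel_latency_ns;
    size_t i;

    /* Add the runtime of every ISR that can preempt or delay this one. */
    for (i = 0; i < index; i++)
        total += isr_max_ns[i];
    return total;
}
```

With a kernel-imposed latency of 1500 ns and maximum ISR runtimes of 2000, 5000, and 3000 ns, the highest-priority interrupt sees only the 1500 ns, while the third sees 1500 + 2000 + 5000 = 8500 ns.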

Note also that by using the InterruptAttachEvent() call, no user ISR is run. Instead, a user-specified event is generated on each and every interrupt; the event will typically cause a waiting thread to be scheduled to run and do the work. The interrupt is automatically masked when the event is generated and then explicitly unmasked by the thread that handles the device at the appropriate time.

Both InterruptMask() and InterruptUnmask() are counting functions. For example, if InterruptMask() is called ten times, then InterruptUnmask() must also be called ten times.
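The counting behavior can be pictured with a small model. This is an illustration only: `struct irq_line` and the `model_*` functions are hypothetical and don't call the kernel.

```c
#include <stdbool.h>

/* Hypothetical model of the mask count kept for one interrupt line. */
struct irq_line {
    unsigned mask_count;
};

/* Each mask request increments the count. */
unsigned model_mask(struct irq_line *line)
{
    return ++line->mask_count;
}

/* Each unmask request decrements it, never going below zero. */
unsigned model_unmask(struct irq_line *line)
{
    if (line->mask_count > 0)
        line->mask_count--;
    return line->mask_count;
}

/* The interrupt is delivered again only when the count is back to zero. */
bool model_is_enabled(const struct irq_line *line)
{
    return line->mask_count == 0;
}
```

After ten calls to `model_mask()`, nine calls to `model_unmask()` still leave the line masked; only the tenth re-enables it.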

Thus the priority of the work generated by hardware interrupts can be performed at OS-scheduled priorities rather than hardware-defined priorities. Since the interrupt source won't re-interrupt until serviced, the effect of interrupts on the runtime of critical code regions for hard-deadline scheduling can be controlled.

In addition to hardware interrupts, various “events” within the microkernel can also be “hooked” by user processes and threads. When one of these events occurs, the kernel can upcall into the indicated function in the user thread to perform some specific processing for this event. For example, whenever the idle thread in the system is called, a user thread can have the kernel upcall into the thread so that hardware-specific low-power modes can be readily implemented.

| Microkernel call | Description |

|---|---|

| InterruptHookIdle() | When the kernel has no active thread to schedule, it will run the idle thread, which can upcall to a user handler. This handler can perform hardware-specific power-management operations. |

| InterruptHookTrace() | This function attaches a pseudo interrupt handler that can receive trace events from the instrumented kernel. |

For more information about interrupts, see the Interrupts chapter of Getting Started with QNX Neutrino, and the Writing an Interrupt Handler chapter of the Neutrino Programmer's Guide.
