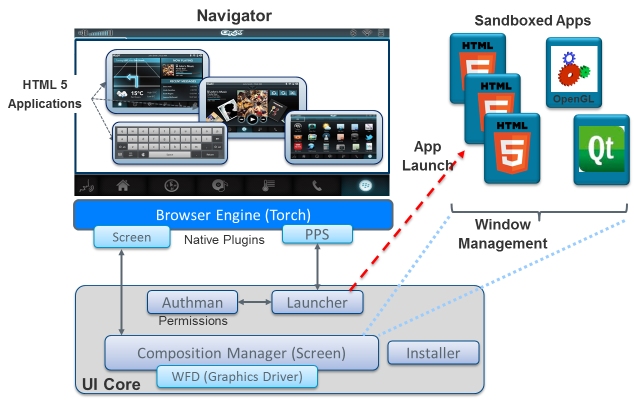

The Application management capability (also known as UI Core) provides control of the application lifecycle: starting, switching, activating, sleeping, and terminating. It also provides a means of restricting the services that applications can use to a preselected set. UI Core includes Screen, a component that allows multiple applications built with disparate technologies to share the same physical display real estate.

As shown in the figure below, the main components of UI Core are the application installer, the authorization manager (Authman), Screen, and the Launcher.

The application installer unpackages the application, validates its signature, and installs the application on the QNX CAR platform.

Launcher enables any application to launch any other application in any UI environment (subject to system permissions).

Authman controls which APIs and system services can be used by each application, enforcing the security model defined by the system developers. This authorization ensures that downloaded applications can't use interfaces they aren't authorized to use. See the HMI Developer's Guide for details.
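
The idea of restricting each application to a preselected set of services can be illustrated with a minimal C sketch. This is not Authman's actual API or policy format; the `app_policy_t` type, the `policy_table` contents, the application and service names, and the `is_authorized()` function are all hypothetical, chosen only to show a deny-by-default lookup.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical permission record: one application and the services it may use. */
typedef struct {
    const char *app_id;
    const char *const *services;   /* names of the allowed services */
    size_t service_count;
} app_policy_t;

/* Hypothetical service lists; the names are illustrative only. */
static const char *const navigator_services[] = { "audio", "geolocation" };
static const char *const weather_services[]   = { "network" };

/* Hypothetical policy table, standing in for the security model
 * defined by the system developers. */
static const app_policy_t policy_table[] = {
    { "navigator", navigator_services, 2 },
    { "weather",   weather_services,   1 },
};

/* Returns true only if the policy explicitly grants the service to the app.
 * Unknown applications and unlisted services are denied by default. */
bool is_authorized(const char *app_id, const char *service)
{
    assert(app_id != NULL && service != NULL);
    size_t n = sizeof policy_table / sizeof policy_table[0];
    for (size_t i = 0; i < n; i++) {
        if (strcmp(policy_table[i].app_id, app_id) != 0)
            continue;
        for (size_t j = 0; j < policy_table[i].service_count; j++) {
            if (strcmp(policy_table[i].services[j], service) == 0)
                return true;
        }
        return false;   /* app is known, but the service is not granted */
    }
    return false;       /* app is not in the policy table */
}
```

In this sketch, `is_authorized("navigator", "geolocation")` succeeds, while a request from an application that is missing from the table is refused regardless of the service it asks for.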

Screen integrates multiple graphics and UI technologies into a single scene that can be rendered on the display. For example, Screen can combine HTML5, Elektrobit GUIDE, Crank Storyboard, Android, Qt, and native (OpenGL ES) rendered content. Developers can create a separate pane for the output of each rendering engine (such as HTML5, Qt, video, or OpenGL ES). Each frame buffer can be transformed (scaling, translation, rotation, alpha blending, etc.) to build the final display. Whenever possible, Screen uses GPU-accelerated operations to build the display, falling back on software only when the hardware can't satisfy a request.

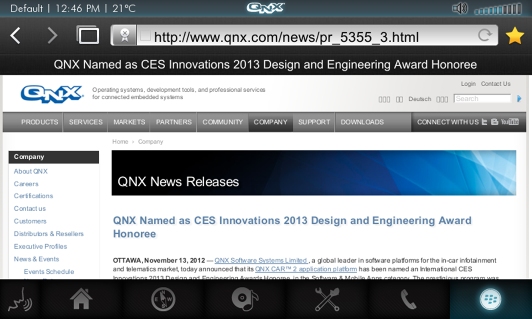

As an example, consider the screenshot of the Torch browser application below:

What appears to be a single homogeneous display is actually made up of the following:

- HTML5 browser chrome (title bar, forward/backward buttons, address bar)

- Natively rendered image

- HTML5 Navigator tabs

This approach has also been used by the TCS navigation engine (HTML5 borders and buttons with OpenGL ES navigation).

Screen provides a lightweight composited windowing system. Unlike traditional windowing systems that arbitrate access to a single buffer associated with a display, a composited windowing system combines individual off-screen buffers holding window contents into one image, which is associated with a display.

Directing all rendering to off-screen buffers allows a more flexible use of window contents without involving the apps that are rendering this content. Windows can be moved around, zoomed in, zoomed out, rotated, or have transparency effects applied to them without requiring the app to redraw anything or to be aware that such effects are taking place.
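
The following C sketch models the compositing idea described above. It does not use the Screen API; the `window_t` type and `compose()` function are hypothetical, and pixels are 8-bit grayscale to keep the arithmetic short. It shows how a compositor might blend off-screen buffers into one display image, so that moving a window or changing its transparency only changes compositor-side values and never touches the pixels the app rendered.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical off-screen window buffer: 8-bit grayscale pixels for brevity. */
typedef struct {
    const uint8_t *pixels;  /* width * height pixels, row-major */
    int width, height;
    int x, y;               /* position on the display, owned by the compositor */
    uint8_t alpha;          /* global transparency: 0 = invisible, 255 = opaque */
} window_t;

/* Blend each window, in back-to-front order, into the display image.
 * The windows' own pixels are never modified, so the applications that
 * rendered them do not need to redraw when x, y, or alpha change. */
void compose(uint8_t *display, int disp_w, int disp_h,
             const window_t *windows, size_t count)
{
    assert(display != NULL && disp_w > 0 && disp_h > 0);
    for (size_t i = 0; i < count; i++) {
        const window_t *w = &windows[i];
        for (int row = 0; row < w->height; row++) {
            int dy = w->y + row;
            if (dy < 0 || dy >= disp_h)
                continue;                       /* clip vertically */
            for (int col = 0; col < w->width; col++) {
                int dx = w->x + col;
                if (dx < 0 || dx >= disp_w)
                    continue;                   /* clip horizontally */
                uint8_t src = w->pixels[row * w->width + col];
                uint8_t *dst = &display[dy * disp_w + dx];
                /* Integer alpha blend: dst = src * a + dst * (1 - a) */
                *dst = (uint8_t)((src * w->alpha + *dst * (255 - w->alpha)) / 255);
            }
        }
    }
}
```

To move a window or fade it, the caller changes only `x`, `y`, or `alpha` and calls `compose()` again; the source buffers stay as the apps left them. A production compositor would normally hand this work to the GPU or display controller rather than loop over pixels on the CPU.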

Screen's display management technology consists of the following principal constructs:

- Context

- The context provides the setting for graphics operations within a windowing environment. The context-specific API components can identify and gain access to the objects that are required to draw windows, groups, displays, and pixmaps, and to set or change their properties and attributes. Displays and windows depend on the context, which is associated directly with events, groups, pixmaps, and windows.

- Events

- Events include window management, setting properties, keyboard events, and touch events.

- Windows

- As the fundamental drawing surface, windows can display different kinds of content for different purposes. They come in several types: application windows, child windows, and embedded windows. Window groups can be used to associate a set of windows with a single app or a single window with the underlying system.

- Groups

- Groups comprise a set of related windows that are all treated as one object. Groups can be used to apply sets of properties and to organize and manage all windows in the group. For example, windows belonging to multimedia applications can be grouped so that the same display and interaction standards apply to all of them: transparency can be applied to components rendered by different technologies, or the components can slide on or off the screen as if they were locked together.

- Displays

- Displays are the physical devices that present the screen to the user (in this case, the in-vehicle head unit's LCD). Display-specific API components give access to display properties, modes, and vertical synchronization (vsync) operations.

- Buffers

- Buffers are areas of memory that can include both windows on the display and memory not yet visible. They let data move around quickly without taking up CPU cycles. Apps seldom use buffers directly (except perhaps to set the properties of a buffer). Device drivers and system services are typically responsible for creating buffer handles, setting the buffer properties, attaching the buffer to a window or a pixmap, and destroying the buffer handles.

- Pixmaps

- Pixmaps are the areas of memory that contain display data. Pixmaps are similar to bitmaps except that bitmaps always have a depth of one bit per pixel, whereas pixmaps can have multiple bits per pixel to store intensity or color component values. Pixmap surfaces can be drawn to directly, outside the viewable area, and copied to a display buffer at a later time. Note that a pixmap is a kind of buffer. A buffer has no knowledge of its contents, but a pixmap knows the structure of the data it contains.
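
The difference between a one-bit-per-pixel bitmap and a multi-bit pixmap shows up directly in memory use. The sketch below is a rough illustration only: `row_bytes()` and `surface_bytes()` are hypothetical helpers, not Screen API functions, and they ignore the row alignment padding (stride) that real buffers often add.

```c
#include <assert.h>
#include <stddef.h>

/* Bytes needed for one row of pixels at the given depth, rounded up to a
 * whole byte. Alignment padding (stride) is deliberately ignored here. */
size_t row_bytes(size_t width, size_t bits_per_pixel)
{
    assert(bits_per_pixel > 0);
    return (width * bits_per_pixel + 7) / 8;
}

/* Total bytes for a surface of the given dimensions and depth. */
size_t surface_bytes(size_t width, size_t height, size_t bits_per_pixel)
{
    return row_bytes(width, bits_per_pixel) * height;
}
```

For an assumed 800 x 480 surface, `surface_bytes(800, 480, 1)` gives 48,000 bytes for a bitmap, while `surface_bytes(800, 480, 32)` gives 1,536,000 bytes for a 32-bit pixmap.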