Before we look at the heap, let's consider memory management in general.

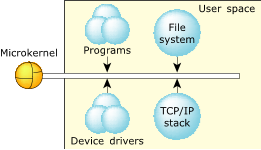

By design, the QNX Neutrino architecture helps ensure that faults, including memory errors, are confined to the program that caused them. Programs are less likely to cause a cascade of faults because processes are isolated from each other and from the microkernel. Even device drivers behave like regular debuggable processes:

Figure 1. The microkernel architecture.

This robust architecture ensures that crashing one program has little or no effect on other programs throughout the system. If a program faults, you can be sure that the error is restricted to that process's operation.

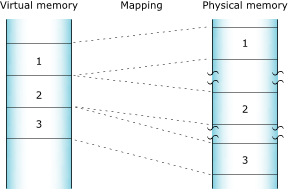

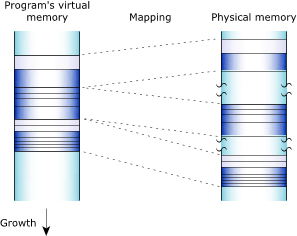

The full memory protection means that almost all the memory addresses your program encounters are virtual addresses. The process manager maps your program's virtual memory addresses to the actual physical memory; memory that is contiguous in your program may be transparently split up in your system's physical memory:

Figure 2. How the process manager allocates memory into pages.

The process manager allocates memory in small pages (typically 4 KB each). To determine the page size for your system, call the sysconf() function with the _SC_PAGESIZE argument.
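As a minimal sketch of the page-size query, the following C fragment wraps sysconf(_SC_PAGESIZE) and rounds a byte count up to whole pages. The helper names page_size() and pages_needed() are illustrative assumptions, not part of any QNX API.

```c
#include <stddef.h>
#include <unistd.h>

/* Return the system page size in bytes, or 0 if it can't be determined. */
size_t page_size(void)
{
    long sz = sysconf(_SC_PAGESIZE);
    return (sz > 0) ? (size_t)sz : 0;
}

/* Number of whole pages needed to back `bytes` bytes (rounds up).
 * `page` must be nonzero. */
size_t pages_needed(size_t bytes, size_t page)
{
    return (bytes + page - 1) / page;
}
```

With a 4 KB page, a 4097-byte region needs two pages, even though only one byte of the second page is used.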

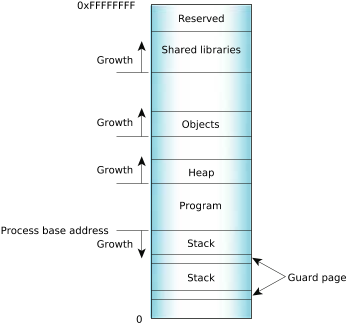

Your program's virtual address space includes the following categories:

- program

- stack

- shared library

- object

- heap

In general terms, the memory is laid out as follows:

Figure 3. Process memory layout on an x86.

In reality, it's a little more complex. The various types of allocations, stacks, heap, shared objects, etc. have separate places where the memory manager starts looking for free virtual address space. The relative positions of the starting point for the search are as indicated in the diagram. Given those starting points, the memory manager starts looking up (if MAP_BELOW isn't set) or down (if MAP_BELOW is set) in the virtual address space of the process, looking for a free region that's big enough. This tends to make allocations group as the diagram shows, but a shared memory allocation, for example, can be located anywhere in the process address space.

QNX Neutrino 6.5 or later supports address space layout randomization (ASLR), which randomizes the stack start address, the code locations in executables and libraries, and heap cookies. With ASLR, the memory manager starts at the appropriate virtual address for the allocation type and searches up or down as appropriate. Once it's found an open spot, it randomly adjusts the address up or down from what it would have used without ASLR.

Use the -mr option for procnto to use ASLR, or -m~r to not use it (the default). A child process normally inherits its parent's ASLR setting, but in QNX Neutrino 6.6 or later, you can change it by using the POSIX_SPAWN_ASLR_INVERT extended flag for posix_spawn() or the SPAWN_ASLR_INVERT flag for spawn() to toggle the setting of ASLR when you create a process from a program.

To determine whether or not your process is using ASLR, use the DCMD_PROC_INFO devctl() command and test for the _NTO_PF_ASLR bit in the flags member of the procfs_info structure.
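The check described above might look like the following sketch. It assumes that the process can open its own address-space file at /proc/self/as (the path is an assumption, not stated in the text), and the function name process_uses_aslr() is hypothetical. The QNX-specific part is guarded by the __QNXNTO__ macro so that the fragment still compiles elsewhere, where it simply reports that the answer is unknown.

```c
#ifdef __QNXNTO__
#include <devctl.h>
#include <errno.h>
#include <fcntl.h>
#include <unistd.h>
#include <sys/neutrino.h>
#include <sys/procfs.h>
#endif

/* Returns 1 if the calling process has ASLR enabled, 0 if it doesn't,
 * and -1 if the answer can't be determined (always -1 on non-QNX builds). */
int process_uses_aslr(void)
{
#ifdef __QNXNTO__
    procfs_info info;
    int result = -1;

    /* Assumption: /proc/self/as refers to the calling process. */
    int fd = open("/proc/self/as", O_RDONLY);
    if (fd == -1) {
        return -1;
    }

    /* devctl() returns EOK on success instead of setting errno. */
    if (devctl(fd, DCMD_PROC_INFO, &info, sizeof(info), NULL) == EOK) {
        result = (info.flags & _NTO_PF_ASLR) ? 1 : 0;
    }

    close(fd);
    return result;
#else
    return -1;
#endif
}
```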

Program memory

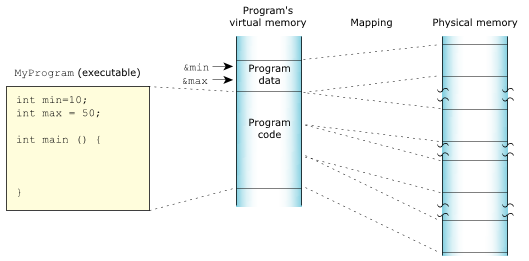

Program memory holds the executable contents of your program. The code section contains the read-only execution instructions (i.e., your actual compiled code); the data section contains all the values of the global and static variables used during your program's lifetime:

Figure 4. The program memory.

Stack memory

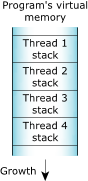

Stack memory holds the local variables and parameters your program's functions use. Each process in QNX Neutrino contains at least the main thread; each of the process's threads has an associated stack. When the program creates a new thread, the program can either allocate the stack and pass it into the thread-creation call, or let the system allocate a default stack size and address.

If the system allocates the stack, the memory is laid out like this:

Figure 5. The stack memory.

When the process manager creates a thread, it reserves the full stack in virtual memory, but not in physical memory. Instead, the process manager requests additional blocks of physical memory only when your program actually needs more stack memory. As one function calls another, the state of the calling function is pushed onto the stack. When the function returns, the local variables and parameters are popped off the stack.

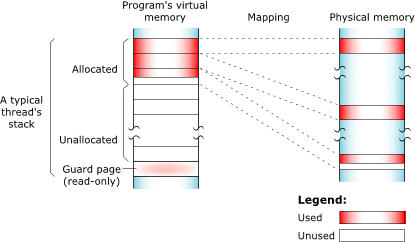

The used portion of the stack holds your thread's state information and takes up physical memory. The unused portion of the stack is initially allocated in virtual address space, but not physical memory:

Figure 6. Stack memory: virtual and physical.

At the end of each virtual stack is a guard page that the microkernel uses to detect stack overflows. If your program writes to an address within the guard page, the microkernel detects the error and sends the process a SIGSEGV signal. There's no physical memory associated with the guard page.

As with other types of memory, the stack memory appears to be contiguous in virtual process memory, but isn't necessarily so in physical memory.

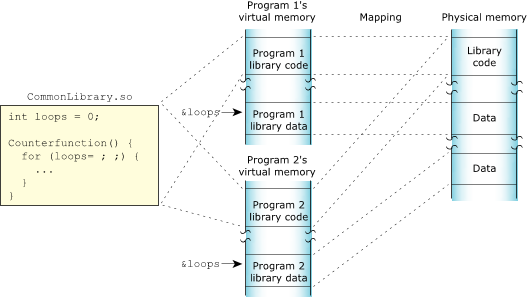

Shared-library memory

Shared-library memory stores the libraries you require for your process. Like program memory, library memory consists of both code and data sections. In the case of shared libraries, all the processes map to the same physical location for the code section and to unique locations for the data section:

Figure 7. The shared library memory.

Object memory

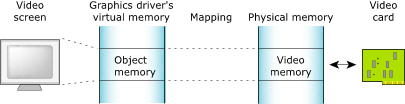

Object memory represents the areas that map into a program's virtual memory space, but this memory may be associated with a physical device. For example, the graphics driver may map the video card's memory to an area of the program's address space:

Figure 8. The object memory.

Heap memory

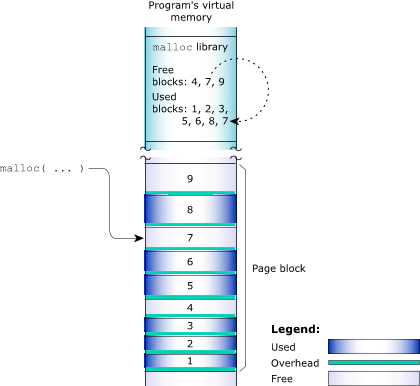

Heap memory represents the dynamic memory used by programs at runtime. Typically, processes allocate this memory using the malloc(), realloc(), and free() functions. These calls ultimately rely on the mmap() function to reserve memory that the library distributes.

The process manager usually allocates memory in 4 KB blocks, but allocations are typically much smaller. Since it would be wasteful to use 4 KB of physical memory when your program wants only 17 bytes, the library manages the heap. The library dispenses the paged memory in smaller chunks and keeps track of the allocated and unused portions of the page:

Figure 9. The allocator manages the blocks of memory.

Each allocation uses a small amount of fixed overhead to store internal data structures. Since there's a fixed overhead with respect to block size, the ratio of allocator overhead to data payload is larger for smaller allocation requests.
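The effect of a fixed per-block overhead can be sketched with a little arithmetic. The function name and the 8-byte overhead used in the note below are assumptions for illustration; the text doesn't state the allocator's actual overhead.

```c
#include <stddef.h>

/* Ratio of allocator bookkeeping to usable payload for one block.
 * `overhead` is a hypothetical fixed per-block cost in bytes; the real
 * value depends on the allocator. Returns 0.0 for an empty payload. */
double overhead_ratio(size_t payload, size_t overhead)
{
    if (payload == 0) {
        return 0.0;
    }
    return (double)overhead / (double)payload;
}
```

Assuming an 8-byte overhead, a 16-byte payload carries a ratio of 0.5, while a 1024-byte payload carries a ratio below 0.01.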

When your program uses malloc() to request a block of memory, the library returns the address of an appropriately sized block. For efficiency, the library includes two allocators:

- a small-block allocator that maintains pools of blocks in various sizes

- a large-block allocator that handles requests for blocks that are larger than the small-block allocator can provide

For example, the library may return a 20-byte block to fulfill a request for 17 bytes, a 1088-byte block for a 1088-byte request, and so on.
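One way a small-block allocator could pick a block is to search a table of pool sizes for the smallest one that fits. The sketch below assumes a made-up table; apart from the 20-byte size mentioned above, the values are not the sizes the QNX library actually uses, and small_block_size() is a hypothetical name.

```c
#include <stddef.h>

/* Hypothetical pool block sizes in ascending order. The real allocator's
 * pool sizes are not given in the text. */
static const size_t pool_sizes[] = { 16, 20, 24, 32, 48, 64, 96, 128 };
#define POOL_COUNT (sizeof(pool_sizes) / sizeof(pool_sizes[0]))

/* Return the smallest pool block that fits `request`, or 0 when the
 * request is too large for any pool and would be passed on to the
 * large-block allocator. */
size_t small_block_size(size_t request)
{
    for (size_t i = 0; i < POOL_COUNT; i++) {
        if (request <= pool_sizes[i]) {
            return pool_sizes[i];
        }
    }
    return 0;
}
```

With this table, a 17-byte request is served from the 20-byte pool, and a 129-byte request falls through to the large-block path.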

When the library receives an allocation request that it can't meet with its existing heap, it requests additional physical memory from the process manager. These allocations are done in chunks called arenas. By default, the arena allocations are performed in 32 KB chunks. The arena size must be a multiple of 4 KB and must currently be less than 256 KB. If your program requests a block that's larger than an arena, the allocator gets a block whose size is a multiple of the arena size from the process manager, gives your program a block of the requested size, and puts any remaining memory on a free list.
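The arena rules above reduce to two small calculations, sketched here. The function and macro names are assumptions, and the sketch ignores any per-block overhead that the real allocator adds to a request.

```c
#include <stddef.h>

#define KB            1024u
#define PAGE_BYTES    (4 * KB)    /* arena sizes must be a multiple of this */
#define ARENA_LIMIT   (256 * KB)  /* arena sizes must be below this */
#define DEFAULT_ARENA (32 * KB)   /* default arena size from the text */

/* An arena size is valid if it's a nonzero multiple of 4 KB and
 * less than 256 KB. */
int arena_size_is_valid(size_t arena)
{
    return arena != 0 && arena % PAGE_BYTES == 0 && arena < ARENA_LIMIT;
}

/* Bytes to request from the process manager: the request rounded up to
 * a whole number of arenas, with a minimum of one arena. */
size_t arena_request_bytes(size_t request, size_t arena)
{
    size_t arenas = (request + arena - 1) / arena;

    if (arenas == 0) {
        arenas = 1;
    }
    return arenas * arena;
}
```

For a 40000-byte request and the default 32 KB arena, this gives 65536 bytes, which leaves 25536 bytes to go on the free list.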

When memory is freed, the library merges adjacent free blocks within arenas and may, when appropriate, release an arena back to the system.
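Merging adjacent free blocks can be sketched as a single pass over the blocks of one arena in address order. The block structure and both function names below are hypothetical; the library's real data structures are not described in the text.

```c
#include <stddef.h>

/* One block inside an arena; the array is kept in address order.
 * Hypothetical layout for illustration only. */
struct block {
    size_t size;     /* block size in bytes */
    int    is_free;  /* nonzero if the block is not in use */
};

/* Merge each run of adjacent free blocks into a single block, compacting
 * the array in place. Returns the new number of blocks. */
size_t coalesce(struct block *blocks, size_t count)
{
    size_t out = 0;

    for (size_t i = 0; i < count; i++) {
        if (out > 0 && blocks[out - 1].is_free && blocks[i].is_free) {
            blocks[out - 1].size += blocks[i].size;  /* absorb into previous */
        } else {
            blocks[out++] = blocks[i];               /* keep as its own block */
        }
    }
    return out;
}

/* An arena is a candidate for release when, after merging, it consists
 * of one free block that spans the whole arena. */
int arena_is_releasable(const struct block *blocks, size_t count,
                        size_t arena_bytes)
{
    return count == 1 && blocks[0].is_free && blocks[0].size == arena_bytes;
}
```

An arena whose two 16 KB halves are both free collapses into a single 32 KB free block, at which point it could be handed back to the system.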

Figure 10. A process's heap memory.
