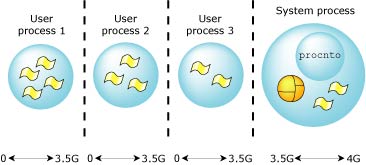

In QNX Neutrino, the microkernel is paired with the Process Manager in a single module (procnto). This module is required for all runtime systems.

The process manager is capable of creating multiple POSIX processes (each of which may contain multiple POSIX threads). Its main areas of responsibility include:

- process management
- memory management
- pathname management

User processes can access microkernel functions directly via kernel calls, and can access process manager functions by sending messages to procnto. Note that a user process sends a message by invoking the MsgSend*() kernel call.

It's important to note that threads executing within procnto invoke the microkernel in exactly the same way as threads in other processes. The fact that the process manager code and the microkernel share the same process address space doesn't imply a “special” or “private” interface. All threads in the system share the same consistent kernel interface and all perform a privilege switch when invoking the microkernel.

The first responsibility of procnto is to dynamically create new processes. These processes will then depend on procnto's other responsibilities of memory management and pathname management.

Process management consists of both process creation and destruction as well as the management of process attributes such as process IDs, process groups, user IDs, etc.

The process primitives include:

- posix_spawn()
- spawn()
- fork()
- vfork()
- exec*()

The posix_spawn() function creates a child process by directly specifying an executable to load. To those familiar with UNIX systems, the call is modeled after a fork() followed by an exec*(). However, it operates much more efficiently in that there's no need to duplicate an address space as in a fork(), only to destroy and replace it when the exec*() is called.

In a UNIX system, one of the main advantages of using the fork()-then-exec*() method of creating a child process is the flexibility in changing the default environment inherited by the new child process. This is done in the forked child just before the exec*(). For example, the following simple shell command would close and reopen the standard output before exec*()'ing:

ls >file

You can do the same with posix_spawn(); it gives you control over the following classes of environment inheritance, which are often adjusted when creating a new child process:

There's also a companion function, posix_spawnp(), that doesn't require the absolute path to the program to spawn, but instead searches for the executable using the caller's PATH.

Using the posix_spawn() functions is the preferred way to create a new child process.
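As a rough sketch of how the `ls >file` redirection shown earlier might be expressed with posix_spawnp(), the following uses a spawn file action to open the output file on the child's standard output. The helper name run_with_stdout_to() is hypothetical, and error handling is kept to a minimum.

```c
#include <fcntl.h>
#include <spawn.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

extern char **environ;

/* Hypothetical helper: run argv[0] (found via PATH) with its standard
 * output redirected to outfile. Returns the child's exit status, or -1
 * if the child could not be spawned or did not exit normally. */
int run_with_stdout_to(const char *outfile, char *const argv[])
{
    posix_spawn_file_actions_t actions;
    pid_t pid;
    int status;
    int rc;

    if (posix_spawn_file_actions_init(&actions) != 0)
        return -1;

    /* In the child, open outfile on descriptor 1 before the program starts. */
    rc = posix_spawn_file_actions_addopen(&actions, STDOUT_FILENO, outfile,
                                          O_WRONLY | O_CREAT | O_TRUNC, 0644);
    if (rc == 0)
        rc = posix_spawnp(&pid, argv[0], &actions, NULL, argv, environ);
    posix_spawn_file_actions_destroy(&actions);
    if (rc != 0)
        return -1;

    if (waitpid(pid, &status, 0) != pid || !WIFEXITED(status))
        return -1;
    return WEXITSTATUS(status);
}
```

A caller would pass a NULL-terminated argument vector such as `{ "ls", NULL }` together with the name of the output file. No address space is duplicated along the way; the redirection is described up front and applied as the child is created.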

The QNX Neutrino spawn() function is similar to posix_spawn(). The spawn() function gives you control over the following:

The basic forms of the spawn() function are:

There's also a set of convenience functions that are built on top of spawn() and spawnp() as follows:

When a process is spawn()'ed, the child process inherits the following attributes of its parent:

The child process has several differences from the parent process:

If the child process is spawned on a remote node, the process group ID and the session membership aren't set; the child process is put into a new session and a new process group.

The child process can access the parent process's environment by using the environ global variable (found in <unistd.h>).

For more information, see the spawn() function in the QNX Neutrino Library Reference.

The fork() function creates a new child process by sharing the same code as the calling process and duplicating the calling process's data to give the child process an exact copy. Most process resources are inherited. The following lists some resources that are explicitly not inherited:

The fork() function is typically used for one of two reasons:

When fork() is used to create a new thread of execution, common data is placed in an explicitly created shared memory region. Prior to the POSIX thread standard, this was the only way to run concurrent flows of control that share data. With POSIX threads, this use of fork() is better accomplished by creating threads within a single process using pthread_create().

When creating a new process running a different program, the call to fork() is soon followed by a call to one of the exec*() functions. This too is better accomplished by a single call to the posix_spawn() function or the QNX Neutrino spawn() function, which combine both operations with far greater efficiency.

Since QNX Neutrino provides better POSIX solutions than using fork(), its use is probably best suited for porting existing code and for writing portable code that must run on a UNIX system that doesn't support the POSIX pthread_create() or posix_spawn() API.

Note that fork() should be called from a process containing only a single thread.

The vfork() function (which should also be called only from a single-threaded process) is useful when the purpose of fork() would have been to create a new system context for a call to one of the exec*() functions. The vfork() function differs from fork() in that the child doesn't get a copy of the calling process's data. Instead, it borrows the calling process's memory and thread of control until a call to one of the exec*() functions is made. The calling process is suspended while the child is using its resources.

The vfork() child can't return from the procedure that called vfork(), since the eventual return from the parent vfork() would then return to a stack frame that no longer existed.

The exec*() family of functions replaces the current process with a new process, loaded from an executable file. Since the calling process is replaced, there can be no successful return.

The following exec*() functions are defined:

The exec*() functions usually follow a fork() or vfork() in order to load a new child process. This is better achieved by using the posix_spawn() call.
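For comparison, the traditional fork()-then-exec*() sequence might look like the following sketch. The function name fork_exec_wait() is hypothetical. The exit code 127 for a failed exec is a common shell convention that we assume here; nothing in the text above requires it.

```c
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical helper: fork, replace the child with argv[0], and wait.
 * Returns the child's exit status, or -1 on fork/wait failure. */
int fork_exec_wait(char *const argv[])
{
    int status;
    pid_t pid = fork();

    if (pid == -1)
        return -1;              /* fork failed; no child exists */

    if (pid == 0) {
        /* Child: on success execvp() never returns, because the
         * calling process image is replaced by the new program. */
        execvp(argv[0], argv);
        _exit(127);             /* reached only if the exec failed */
    }

    /* Parent: wait for the child to finish. */
    if (waitpid(pid, &status, 0) != pid || !WIFEXITED(status))
        return -1;
    return WEXITSTATUS(status);
}
```

The two-step structure is what makes this approach more expensive than a single posix_spawn() call: the child briefly exists as a copy of the parent only to be replaced.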

Processes loaded from a filesystem using the exec*(), posix_spawn() or spawn() calls are in ELF format. If the filesystem is on a block-oriented device, the code and data are loaded into main memory. By default, the memory pages containing the binaries are demand-loaded, but you can use the procnto -m option to change this; for more information, see “Locking memory,” later in this chapter.

If the filesystem is memory mapped (e.g., ROM/flash image), the code needn't be loaded into RAM, but may be executed in place. This approach makes all RAM available for data and stack, leaving the code in ROM or flash. In all cases, if the same process is loaded more than once, its code will be shared.

While some realtime kernels or executives provide support for memory protection in the development environment, few provide protected memory support for the runtime configuration, citing penalties in memory and performance as reasons. But with memory protection becoming common on many embedded processors, the benefits of memory protection far outweigh the very small penalties in performance for enabling it.

The key advantage gained by adding memory protection to embedded applications, especially for mission-critical systems, is improved robustness.

With memory protection, if one of the processes executing in a multitasking environment attempts to access memory that hasn't been explicitly declared or allocated for the type of access attempted, the MMU hardware can notify the OS, which can then abort the thread (at the failing/offending instruction).

This “protects” process address spaces from each other, preventing coding errors in a thread in one process from “damaging” memory used by threads in other processes or even in the OS. This protection is useful both for development and for the installed runtime system, because it makes postmortem analysis possible.

During development, common coding errors (e.g., stray pointers and indexing beyond array bounds) can result in one process/thread accidentally overwriting the data space of another process. If the overwriting touches memory that isn't referenced again until much later, you can spend hours of debugging — often using in-circuit emulators and logic analyzers — in an attempt to find the “guilty party.”

With an MMU enabled, the OS can abort the process the instant the memory-access violation occurs, providing immediate feedback to the programmer instead of mysteriously crashing the system some time later. The OS can then provide the location of the errant instruction in the failed process, or position a symbolic debugger directly on this instruction.

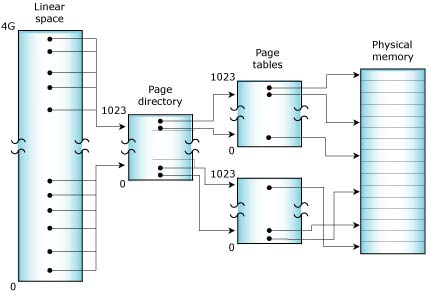

A typical MMU operates by dividing physical memory into a number of 4-KB pages. The hardware within the processor then uses a set of page tables stored in system memory that define the mapping of virtual addresses (i.e., the memory addresses used within the application program) to the addresses emitted by the CPU to access physical memory.

While the thread executes, the page tables managed by the OS control how the memory addresses that the thread is using are “mapped” onto the physical memory attached to the processor.

Virtual address mapping (on an x86).

For a large address space with many processes and threads, the number of page-table entries needed to describe these mappings can be significant — more than can be stored within the processor. To maintain performance, the processor caches frequently used portions of the external page tables within a TLB (translation look-aside buffer).

The servicing of “misses” on the TLB cache is part of the overhead imposed by enabling the MMU. Our OS uses various clever page-table arrangements to minimize this overhead.

Associated with these page tables are bits that define the attributes of each page of memory. Pages can be marked as read-only, read-write, etc. Typically, the memory of an executing process would be described with read-only pages for code, and read-write pages for the data and stack.

When the OS performs a context switch (i.e., suspends the execution of one thread and resumes another), it will manipulate the MMU to use a potentially different set of page tables for the newly resumed thread. If the OS is switching between threads within a single process, no MMU manipulations are necessary.

When the new thread resumes execution, any addresses generated as the thread runs are mapped to physical memory through the assigned page tables. If the thread tries to use an address not mapped to it, or it tries to use an address in a way that violates the defined attributes (e.g., writing to a read-only page), the CPU will receive a “fault” (similar to a divide-by-zero error), typically implemented as a special type of interrupt.

By examining the instruction pointer pushed on the stack by the interrupt, the OS can determine the address of the instruction that caused the memory-access fault within the thread/process and can act accordingly.
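The effect of page attributes can be observed from user code. In the sketch below, a child process maps one read-only page and then writes to it; the parent checks whether the child was terminated by a memory-fault signal. The function name is hypothetical. We assume the fault is delivered as SIGSEGV or SIGBUS, which may vary between systems.

```c
#include <fcntl.h>
#include <signal.h>
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Returns 1 if a child that writes to a read-only page is killed by a
 * memory-fault signal, 0 if the write went through, -1 on any other
 * outcome (setup failure or an unexpected signal). */
int write_to_readonly_page_faults(void)
{
    int status;
    pid_t pid = fork();

    if (pid == -1)
        return -1;

    if (pid == 0) {
        long page = sysconf(_SC_PAGESIZE);
        int fd = open("/dev/zero", O_RDONLY);
        volatile char *p;

        if (page <= 0 || fd == -1)
            _exit(2);
        /* Map one zero-filled page with read-only attributes. */
        p = mmap(NULL, (size_t)page, PROT_READ, MAP_PRIVATE, fd, 0);
        if (p == MAP_FAILED)
            _exit(2);
        p[0] = 1;       /* violates the page's read-only attribute */
        _exit(0);       /* reached only if the write was not trapped */
    }

    if (waitpid(pid, &status, 0) != pid)
        return -1;
    if (WIFSIGNALED(status)) {
        int sig = WTERMSIG(status);
        return (sig == SIGSEGV || sig == SIGBUS) ? 1 : -1;
    }
    return (WIFEXITED(status) && WEXITSTATUS(status) == 0) ? 0 : -1;
}
```

Only the offending child is terminated; the parent keeps running and can inspect what happened, which is the isolation property that memory protection provides.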

While memory protection is useful during development, it can also provide greater reliability for embedded systems installed in the field. Many embedded systems already employ a hardware “watchdog timer” to detect if the software or hardware has “lost its mind,” but this approach lacks the finesse of an MMU-assisted watchdog.

Hardware watchdog timers are usually implemented as a retriggerable monostable timer attached to the processor reset line. If the system software doesn't strobe the hardware timer regularly, the timer will expire and force a processor reset. Typically, some component of the system software will check for system integrity and strobe the timer hardware to indicate the system is “sane.”

Although this approach enables recovery from a lockup related to a software or hardware glitch, it results in a complete system restart and perhaps significant “downtime” while this restart occurs.

When an intermittent software error occurs in a memory-protected system, the OS can catch the event and pass control to a user-written thread instead of the memory dump facilities. This thread can make an intelligent decision about how best to recover from the failure, instead of forcing a full reset as the hardware watchdog timer would do. The software watchdog could:

The important distinction here is that we retain intelligent, programmed control of the embedded system, even though various processes and threads within the control software may have failed for various reasons. A hardware watchdog timer is still of use to recover from hardware “latch-ups,” but for software failures we now have much better control.

While performing some variation of these recovery strategies, the system can also collect information about the nature of the software failure. For example, if the embedded system contains or has access to some mass storage (flash memory, hard drive, a network link to another computer with disk storage), the software watchdog can generate a chronologically archived sequence of dump files. These dump files could then be used for postmortem diagnostics.

Embedded control systems often employ these “partial restart” approaches to surviving intermittent software failures without the operators experiencing any system “downtime” or even being aware of these quick-recovery software failures. Since the dump files are available, the developers of the software can detect and correct software problems without having to deal with the emergencies that result when critical systems fail at inconvenient times. If we compare this to the hardware watchdog timer approach and the prolonged interruptions in service that result, it's obvious what our preference is!

Postmortem dump-file analysis is especially important for mission-critical embedded systems. Whenever a critical system fails in the field, significant effort should be made to identify the cause of the failure so that a “fix” can be engineered and applied to other systems before they experience similar failures.

Dump files give programmers the information they need to fix the problem — without them, programmers may have little more to go on than a customer's cryptic complaint that “the system crashed.”

By dividing embedded software into a team of cooperating, memory-protected processes (containing threads), we can readily treat these processes as “components” to be used again in new projects. Because of the explicitly defined (and hardware-enforced) interfaces, these processes can be integrated into applications with confidence that they won't disrupt the system's overall reliability. In addition, because the exact binary image (not just the source code) of the process is being reused, we can better control changes and instabilities that might have resulted from recompilation of source code, relinking, new versions of development tools, header files, library routines, etc.

Since the binary image of the process is reused (with its behavior perhaps modified by command-line options), the confidence we have in that binary module from acquired experience in the field more easily carries over to new applications than if the binary image of the process were changed.

As much as we strive to produce error-free code for the systems we deploy, the reality of software-intensive embedded systems is that programming errors will end up in released products. Rather than pretend these bugs don't exist (until the customer calls to report them), we should adopt a “mission-critical” mindset. Systems should be designed to be tolerant of, and able to recover from, software faults. Making use of the memory protection delivered by integrated MMUs in the embedded systems we build is a good step in that direction.

Our full-protection model relocates all code in the image into a new virtual space, enabling the MMU hardware and setting up the initial page-table mappings. This allows procnto to start in a correct, MMU-enabled environment. The process manager will then take over this environment, changing the mapping tables as needed by the processes it starts.

In the full-protection model, each process is given its own private virtual memory, which spans up to 2 or 3.5 gigabytes (depending on the CPU). This is accomplished by using the CPU's MMU. The performance cost for a process switch and a message pass will increase due to the increased complexity of obtaining addressability between two completely private address spaces.

Private memory space starts at 0 on x86, SH-4, ARM, and MIPS processors, but not on the PowerPC, where the space from 0 to 1 GB is reserved for system processes.

Full protection VM (on an x86).

The memory cost per process may increase by 4 KB to 8 KB for each process's page tables. Note that this memory model supports the POSIX fork() call.

The virtual memory manager may use variable page sizes if the processor supports them and there's a benefit to doing so. Using a variable page size can improve performance because:

If you want to disable the variable page size feature, specify the -m~v option to procnto in your buildfile. The -mv option enables it.

QNX Neutrino supports POSIX memory locking, so that a process can avoid the latency of fetching a page of memory, by locking the memory so that the page is memory-resident (i.e., it remains in physical memory).

The levels of locking are as follows:

Failure to initialize the page results in the receipt of a SIGBUS signal.

To lock and unlock a portion of a thread's memory, call mlock() and munlock(); to lock and unlock all of a thread's memory, call mlockall() and munlockall(). The memory remains locked until the process unlocks it, exits, or calls an exec*() function. If the process calls fork(), a posix_spawn*() function, or a spawn*() function, the memory locks are released in the child process.

More than one process can lock the same (or overlapping) region; the memory remains locked until all the processes have unlocked it. Memory locks don't stack; if a process locks the same region more than once, unlocking it once undoes all of the process's locks on that region.
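The sketch below shows one way to lock an arbitrary buffer with mlock(). POSIX allows an implementation to require a page-aligned address, so the range is first widened to whole pages. The helper names page_span(), lock_range(), and unlock_range() are hypothetical, and the page size is assumed to be a power of two.

```c
#include <stddef.h>
#include <stdint.h>
#include <sys/mman.h>
#include <unistd.h>

/* Widen [addr, addr + len) to whole pages. page must be a power of two. */
void page_span(uintptr_t addr, size_t len, size_t page,
               uintptr_t *start, size_t *span)
{
    uintptr_t mask  = ~(uintptr_t)(page - 1);
    uintptr_t first = addr & mask;                      /* round down */
    uintptr_t last  = (addr + len + page - 1) & mask;   /* round up */

    *start = first;
    *span  = (size_t)(last - first);
}

/* Lock the pages covering the buffer; returns 0 or -1 like mlock(). */
int lock_range(const void *addr, size_t len)
{
    uintptr_t start;
    size_t span;
    long page = sysconf(_SC_PAGESIZE);

    if (page <= 0)
        return -1;
    page_span((uintptr_t)addr, len, (size_t)page, &start, &span);
    return mlock((const void *)start, span);
}

/* Unlock the same pages; returns 0 or -1 like munlock(). */
int unlock_range(const void *addr, size_t len)
{
    uintptr_t start;
    size_t span;
    long page = sysconf(_SC_PAGESIZE);

    if (page <= 0)
        return -1;
    page_span((uintptr_t)addr, len, (size_t)page, &start, &span);
    return munlock((const void *)start, span);
}
```

Because locks don't stack, a single unlock_range() call releases the region no matter how many times the same process called lock_range() on it. Whether a lock request succeeds can also depend on system limits and privileges, so callers should check the return value.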

To lock all memory for all applications, specify the -ml option for procnto. Thus all pages are at least initialized (if still set only to PROT_READ).

To superlock memory, obtain I/O privileges by calling ThreadCtl(), specifying the _NTO_TCTL_IO flag:

ThreadCtl( _NTO_TCTL_IO, 0 );

To superlock all memory for all applications, specify the -mL option for procnto.

For MAP_LAZY mappings, memory isn't allocated or mapped until the memory is first referenced for any of the above types. Once it's been referenced, it obeys the above rules — it's a programmer error to touch a MAP_LAZY area in a critical region (where interrupts are disabled or in an ISR) that hasn't already been referenced.

Most computer users are familiar with the concept of disk fragmentation, whereby over time, the free space on the disk is split into small blocks scattered among the in-use blocks. A similar problem occurs as the OS allocates and frees pieces of physical memory; as time passes, the system's physical memory can become fragmented. Eventually, even though there might be a significant amount of memory free in total, it's fragmented so that a request for a large piece of contiguous memory will fail.

Contiguous memory is often required for device drivers if the device uses DMA. The normal workaround is to ensure that all device drivers are initialized early (before memory is fragmented) and that they hold onto their memory. This is a harsh restriction, particularly for embedded systems that might want to use different drivers depending on the actions of the user; starting all possible device drivers simultaneously may not be feasible.

The algorithms that QNX Neutrino uses to allocate physical memory help to significantly reduce the amount of fragmentation that occurs. However, no matter how smart these algorithms might be, specific application behavior can result in fragmented free memory. Consider a completely degenerate application that routinely allocates 8 KB of memory and then frees half of it. If such an application runs long enough, it will reach a point where half of the system memory is free, but no free block is larger than 4 KB.

Thus, no matter how good our allocation routines are at avoiding fragmentation, in order to satisfy a request for contiguous memory, it may be necessary to run some form of defragmentation algorithm.
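The degenerate case above can be illustrated with a toy model in which physical memory is an array of 4-KB quantums. This is only a simulation for illustration; the function names are hypothetical and it says nothing about how procnto actually allocates memory.

```c
#include <stddef.h>

#define QUANTUM_KB 4u

/* Each element of used[] is one 4-KB quantum: 1 = in use, 0 = free. */

/* Mimic the degenerate application: take 8 KB (two quantums) at a
 * time, then free the second half of each allocation. */
void allocate_8k_free_half(unsigned char *used, size_t nquanta)
{
    size_t i;

    for (i = 0; i + 1 < nquanta; i += 2) {
        used[i] = 1;        /* first half stays allocated */
        used[i + 1] = 0;    /* second half is freed again */
    }
}

/* Total free memory, in KB. */
size_t free_kb(const unsigned char *used, size_t nquanta)
{
    size_t i, n = 0;

    for (i = 0; i < nquanta; i++)
        if (!used[i])
            n++;
    return n * QUANTUM_KB;
}

/* Size of the largest contiguous free block, in KB. */
size_t largest_free_run_kb(const unsigned char *used, size_t nquanta)
{
    size_t i, run = 0, best = 0;

    for (i = 0; i < nquanta; i++) {
        run = used[i] ? 0 : run + 1;
        if (run > best)
            best = run;
    }
    return best * QUANTUM_KB;
}
```

With a 64-quantum (256-KB) array, the model ends with 128 KB free in total but no free block larger than 4 KB, so an 8-KB contiguous request would fail without defragmentation.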

The term “fragmentation” can apply to both in-use memory and free memory:

In disk-based filesystems, fragmentation of in-use blocks is most important, as it impacts the read and write performance of the device. Fragmentation of free blocks is important only in that it leads to fragmentation of in-use blocks as new blocks are allocated. In general, users of disk-based systems don't care about allocating contiguous blocks, except as it impacts performance.

For the QNX Neutrino memory system, both forms of fragmentation are important but for different reasons:

To defragment free memory, the memory manager swaps memory that's in use for memory that's free, in such a way that the free memory blocks coalesce into larger blocks that are sufficient to satisfy a request for contiguous memory.

When an application allocates memory, it's provided by the operating system in quantums, 4-KB blocks of memory that exist on 4-KB boundaries. The operating system programs the MMU so that the application can reference the physical block of memory through a virtual address; during operation, the MMU translates a virtual address into a physical address.

For example, a request for 16 KB of memory is satisfied by allocating four 4-KB quantums. The operating system sets aside the four physical blocks for the application and configures the MMU to ensure that the application can reference them through a 16-KB contiguous virtual address. However, these blocks might not be physically contiguous; the operating system can arrange the MMU configuration (the virtual to physical mapping) so that non-contiguous physical addresses are accessed through contiguous virtual addresses.
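A toy page table makes the 16-KB example concrete: four consecutive virtual pages are backed by four scattered physical quantums. The single-level table and the name translate() are simplifying assumptions for illustration; real MMUs use multi-level tables and a TLB.

```c
#include <stddef.h>
#include <stdint.h>

#define QUANTUM_SIZE 4096u

/* page_table[i] holds the physical frame number backing virtual page i.
 * Stores the physical address in *paddr and returns 0, or returns -1
 * if the virtual address lies outside the mapped range. */
int translate(const uint32_t *page_table, size_t npages,
              uint32_t vaddr, uint32_t *paddr)
{
    uint32_t vpage  = vaddr / QUANTUM_SIZE;   /* which virtual page */
    uint32_t offset = vaddr % QUANTUM_SIZE;   /* position within the page */

    if (vpage >= npages)
        return -1;                            /* unmapped: would fault */
    *paddr = page_table[vpage] * QUANTUM_SIZE + offset;
    return 0;
}
```

With a table of `{ 7, 2, 9, 4 }`, virtual addresses 0x0000 through 0x3FFF form one contiguous 16-KB range, even though the backing frames 7, 2, 9, and 4 are not adjacent in physical memory.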

The task of defragmentation consists of changing existing memory allocations and mappings to use different underlying physical pages. By swapping around the underlying physical quantums, the OS can consolidate the fragmented free blocks into contiguous runs. However, it's careful to avoid moving certain types of memory where the virtual-to-physical mapping can't safely be changed:

There are other times when memory can't be moved; see “Automatically marking memory as unmovable,” below.

Defragmentation is done, if necessary, when an application allocates a piece of contiguous memory. The application does this through the mmap() call, providing MAP_PHYS | MAP_ANON flags. If it isn't possible to satisfy a MAP_PHYS allocation with contiguous memory, what happens depends on whether defragmentation is disabled or enabled:

During memory defragmentation, the thread calling mmap() is blocked. Compaction can take a significant amount of time (particularly on systems with large amounts of memory), but other system activities are mostly unaffected.

Since other system tasks are running simultaneously, the defragmentation algorithm takes into account that memory mappings can change while the algorithm is running.

Defragmenting is enabled by default. You can disable it by using the procnto command-line option -m~d, and enable it by using the -md option.

Memory that's allocated to be physically contiguous is marked as “unmovable” for the compaction algorithm. This is done because specifying that a memory allocation must be contiguous implies that it will be used in a situation where its physical address is important, and moving such memory could break an application that depends on this characteristic.

In addition, memory that has a mem_offset() performed on it — to report the physical address that backs a virtual address — might need to be protected from being moved by the compaction algorithm. However, the OS doesn't want to always mark such memory as unmovable, because programs can call mem_offset() out of curiosity (as, for example, the IDE's memory profiler does). We don't want to lock down memory from being moved in all such cases.

On the other hand, if an application depends on the results of the mem_offset() call, and the OS later moves the memory allocation, that might break the application. Such an application should lock its memory (with the mlock() call), but since QNX Neutrino hasn't always moved memory in the past, it can't assume that all applications behave correctly.

To this end, procnto supports a -ma command-line option. If you specify this option, any calls to mem_offset() automatically mark the memory block as unmovable. Note that memory that was allocated contiguously or has been locked through mlock() is already unmovable, so this option has no effect on such memory. The option is also relevant only if the memory defragmentation feature is enabled.

This option is disabled by default. If you find an application that behaves poorly, you can enable automatic marking as a workaround until the application is corrected.

I/O resources are not built into the microkernel, but are instead provided by resource manager processes that may be started dynamically at runtime. The procnto manager allows resource managers, through a standard API, to adopt a subset of the pathname space as a “domain of authority” to administer. As other resource managers adopt their respective domains of authority, procnto becomes responsible for maintaining a pathname tree to track the processes that own portions of the pathname space. An adopted pathname is sometimes referred to as a “prefix” because it prefixes any pathnames that lie beneath it; prefixes can be arranged in a hierarchy called a prefix tree. The adopted pathname is also called a mountpoint, because that's where a server mounts into the pathname.

This approach to pathname space management is what allows QNX Neutrino to preserve the POSIX semantics for device and file access, while making the presence of those services optional for small embedded systems.

At startup, procnto populates the pathname space with the following pathname prefixes:

| Prefix | Description |

|---|---|

| / | Root of the filesystem. |

| /proc/boot/ | Some of the files from the boot image presented as a flat filesystem. |

| /proc/pid | The running processes, each represented by its process ID (PID). For more information, see “Controlling processes via the /proc filesystem” in the Processes chapter of the QNX Neutrino Programmer's Guide. |

| /dev/zero | A device that always returns zero. Used for allocating zero-filled pages using the mmap() function. |

| /dev/mem | A device that represents all physical memory. |
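As a minimal sketch of the /dev/zero usage mentioned in the table, the following maps zero-filled, private, writable pages by opening the device and passing its descriptor to mmap(). The helper name map_zero_pages() is hypothetical.

```c
#include <fcntl.h>
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical helper: return len bytes of zero-filled, writable memory
 * backed by /dev/zero, or NULL on failure. Release with munmap(). */
void *map_zero_pages(size_t len)
{
    void *p;
    int fd = open("/dev/zero", O_RDWR);

    if (fd == -1)
        return NULL;
    p = mmap(NULL, len, PROT_READ | PROT_WRITE, MAP_PRIVATE, fd, 0);
    close(fd);  /* the mapping stays valid after the descriptor is closed */
    return (p == MAP_FAILED) ? NULL : p;
}
```

Because the mapping is private, writes go to the caller's own copy of the pages and never reach the device.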

When a process opens a file, the POSIX-compliant open() library routine first sends the pathname to procnto, where the pathname is compared against the prefix tree to determine which resource managers should be sent the open() message.

The prefix tree may contain identical or partially overlapping regions of authority — multiple servers can register the same prefix. If the regions are identical, the order of resolution can be specified (see “Ordering mountpoints,” below). If the regions are overlapping, the responses from the path manager are ordered with the longest prefixes first; for prefixes of equal length, the same specified order of resolution applies as for identical regions.

For example, suppose we have these prefixes registered:

| Prefix | Description |

|---|---|

| / | QNX 4 disk-based filesystem (fs-qnx4.so) |

| /dev/ser1 | Serial device manager (devc-ser*) |

| /dev/ser2 | Serial device manager (devc-ser*) |

| /dev/hd0 | Raw disk volume (devb-eide) |

The filesystem manager has registered a prefix for a mounted QNX 4 filesystem (i.e., /). The block device driver has registered a prefix for a block special file that represents an entire physical hard drive (i.e., /dev/hd0). The serial device manager has registered two prefixes for the two PC serial ports.

The following table illustrates the longest-match rule for pathname resolution:

| This pathname: | matches: | and resolves to: |

|---|---|---|

| /dev/ser1 | /dev/ser1 | devc-ser* |

| /dev/ser2 | /dev/ser2 | devc-ser* |

| /dev/ser | / | fs-qnx4.so |

| /dev/hd0 | /dev/hd0 | devb-eide |

| /usr/jhsmith/test | / | fs-qnx4.so |
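The longest-match rule in the table can be sketched as a small lookup over the registered prefixes. This is an illustrative model only, not procnto's implementation; the function names are hypothetical, and we assume a prefix must match on a pathname-component boundary (which is why /dev/ser falls through to /).

```c
#include <stddef.h>
#include <string.h>

/* Nonzero if prefix covers path on a pathname-component boundary. */
static int prefix_covers(const char *prefix, const char *path)
{
    size_t n = strlen(prefix);

    if (strncmp(prefix, path, n) != 0)
        return 0;
    if (n > 0 && prefix[n - 1] == '/')
        return 1;                   /* "/" covers every absolute path */
    return path[n] == '\0' || path[n] == '/';
}

/* Return the longest registered prefix that covers path, or NULL. */
const char *longest_prefix(const char *const *prefixes, size_t count,
                           const char *path)
{
    const char *best = NULL;
    size_t best_len = 0;
    size_t i;

    for (i = 0; i < count; i++) {
        size_t n = strlen(prefixes[i]);

        if (prefix_covers(prefixes[i], path) &&
            (best == NULL || n > best_len)) {
            best = prefixes[i];
            best_len = n;
        }
    }
    return best;
}
```

Given the four prefixes registered above, /dev/ser1 resolves to /dev/ser1, while /dev/ser and /usr/jhsmith/test both resolve to /.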

Generally the order of resolving a filename is the order in which you mounted the filesystems at the same mountpoint (i.e., new mounts go on top of or in front of any existing ones). You can specify the order of resolution when you mount the filesystem. For example, you can use:

You can also use the -o option to mount with these keywords:

If you specify the appropriate before option, the filesystem floats in front of any other filesystems mounted at the same mountpoint, except those that you later mount with before. If you specify after, the filesystem goes behind any other filesystems mounted at the same mountpoint, except those that are already mounted with after. So, the search order for these filesystems is:

1. those mounted with before
2. those mounted with no flags
3. those mounted with after

with each list searched in order of mount requests. The first server to claim the name gets it. You would typically use after to have a filesystem wait at the back and pick up things that no one else is handling, and before to make sure a filesystem looks first at filenames.

Consider an example involving three servers:

At this point, the process manager's internal mount table would look like this:

| Mountpoint | Server |

|---|---|

| / | Server A (QNX 4 filesystem) |

| /bin | Server B (flash filesystem) |

| /dev/random | Server C (device) |

Of course, each “Server” name is actually an abbreviation for the nd,pid,chid for that particular server channel.

Now suppose a client wants to send a message to Server C. The client's code might look like this:

```c
int fd;

fd = open("/dev/random", ...);
read(fd, ...);
close(fd);
```

In this case, the C library will ask the process manager for the servers that could potentially handle the path /dev/random. The process manager would return a list of servers:

From this information, the library will then contact each server in turn and send it an open message, including the component of the path that the server should validate:

As soon as one server positively acknowledges the request, the library won't contact the remaining servers. This means Server A is contacted only if Server C denies the request.
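The "first server to accept wins" behavior can be modeled with a short loop. Everything here is a hypothetical stand-in: the struct, the handler functions, and the path remainders in the usage note are illustrative assumptions, and real clients exchange messages with servers rather than calling function pointers.

```c
#include <stddef.h>

/* Hypothetical stand-in for sending an open message to one server:
 * returns nonzero if the server accepts the remaining path. */
typedef int (*open_handler)(const char *remainder);

struct candidate {
    const char  *server;     /* label for the server */
    const char  *remainder;  /* part of the path this server must validate */
    open_handler try_open;
};

/* Sample handlers standing in for a server's reply. */
int accept_open(const char *remainder) { (void)remainder; return 1; }
int deny_open(const char *remainder)   { (void)remainder; return 0; }

/* Contact the candidates in the order returned by the process manager.
 * Returns the index of the first server that accepts, or -1 if none do. */
int resolve_open(const struct candidate *list, size_t count)
{
    size_t i;

    for (i = 0; i < count; i++)
        if (list[i].try_open(list[i].remainder))
            return (int)i;  /* later candidates are never contacted */
    return -1;
}
```

With a list of Server C followed by Server A, the loop stops at index 0 when Server C accepts, and reaches Server A only if Server C denies the request.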

This process is fairly straightforward with single device entries, where the first server is generally the server that will handle the request. Where it becomes interesting is in the case of unioned filesystem mountpoints.

Let's assume we have two servers set up as before:

Note that each server has a /bin directory, but with different contents.

Once both servers are mounted, you would see the following due to the unioning of the mountpoints:

What's happening here is that the resolution for the path /bin takes place as before, but rather than limit the return to just one connection ID, all the servers are contacted and asked about their handling for the path:

```c
DIR *dirp;

dirp = opendir("/bin");
closedir(dirp);
```

which results in:

The result now is that we have a collection of file descriptors to servers who handle the path /bin (in this case two servers); the actual directory name entries are read in turn when a readdir() is called. If any of the names in the directory are accessed with a regular open, then the normal resolution procedure takes place and only one server is accessed.
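From the application's point of view, reading a unioned directory is no different from reading any other directory. The following sketch counts the entries that readdir() returns for a path; the function name count_dir_entries() is hypothetical.

```c
#include <dirent.h>
#include <string.h>

/* Count the entries in a directory, excluding "." and "..".
 * Returns -1 if the directory can't be opened. */
long count_dir_entries(const char *path)
{
    DIR *dirp = opendir(path);
    struct dirent *entry;
    long count = 0;

    if (dirp == NULL)
        return -1;

    /* Each readdir() call returns the next name, or NULL at the end. */
    while ((entry = readdir(dirp)) != NULL) {
        if (strcmp(entry->d_name, ".") != 0 &&
            strcmp(entry->d_name, "..") != 0)
            count++;
    }
    closedir(dirp);
    return count;
}
```

On a system with unioned mountpoints as described above, the same loop would see names contributed by every server that handles the path, without any change to the calling code.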

This overlaying of mountpoints is a very handy feature when doing field updates, servicing, etc. It also makes for a more unified system, where pathnames result in connections to servers regardless of what services they're providing, thus resulting in a more unified API.

We've discussed prefixes that map to a resource manager. A second form of prefix, known as a symbolic prefix, is a simple string substitution for a matched prefix.

You create symbolic prefixes using the POSIX ln (link) command. This command is typically used to create hard links or, with the -s option, symbolic links on a filesystem. If you also specify the -P option, then the symbolic link is created in the in-memory prefix space of procnto.

| Command | Description |
|---|---|
| ln -s existing_file symbolic_link | Create a filesystem symbolic link. |
| ln -Ps existing_file symbolic_link | Create a prefix tree symbolic link. |

Note that a prefix tree symbolic link will always take precedence over a filesystem symbolic link.

For example, assume you're running on a machine that doesn't have a local filesystem. However, there's a filesystem on another node (say neutron) that you wish to access as “/bin”. You accomplish this using the following symbolic prefix:

ln -Ps /net/neutron/bin /bin

This will cause /bin to be mapped into /net/neutron/bin. For example, /bin/ls will be replaced with the following:

/net/neutron/bin/ls

This new pathname will again be applied against the prefix tree, but this time the prefix matched will be /net, which will point to lsm-qnet. The lsm-qnet resource manager will then resolve the neutron component, and redirect further resolution requests to the node called neutron. On node neutron, the rest of the pathname (i.e., /bin/ls) will be resolved against the prefix space on that node. This will resolve to the filesystem manager on node neutron, where the open() request will be directed. With just a few characters, this symbolic prefix has allowed us to access a remote filesystem as though it were local.
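The string substitution itself is simple enough to model in a few lines. The sketch below is not procnto's implementation; `apply_symbolic_prefix()` is a hypothetical helper, and matching only on whole path components is an assumption made for the example.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Replace a matched leading prefix of path with target.
 * Returns 1 if substituted, 0 if the prefix doesn't match,
 * and -1 if the result doesn't fit in out. */
int apply_symbolic_prefix(const char *path, const char *prefix,
                          const char *target, char *out, size_t outsz)
{
    assert(path && prefix && target && out);

    size_t plen = strlen(prefix);

    /* Assumed rule: match whole components only, so that the
     * prefix "/bin" doesn't capture "/binary". */
    if (strncmp(path, prefix, plen) != 0 ||
        (path[plen] != '\0' && path[plen] != '/'))
        return 0;

    int n = snprintf(out, outsz, "%s%s", target, path + plen);
    return (n >= 0 && (size_t)n < outsz) ? 1 : -1;
}
```

Given the prefix /bin and the target /net/neutron/bin, the path /bin/ls becomes /net/neutron/bin/ls, which would then be applied against the prefix tree again.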

It's not necessary to run a local filesystem process to perform the redirection. A diskless workstation's prefix tree might look something like this:

With this prefix tree, local devices such as /dev/ser1 or /dev/console will be routed to the local character device manager, while requests for other pathnames will be routed to the remote filesystem.

You can also use symbolic prefixes to create special device names. For example, if your modem was on /dev/ser1, you could create a symbolic prefix of /dev/modem as follows:

ln -Ps /dev/ser1 /dev/modem

Any request to open /dev/modem will be replaced with /dev/ser1. This mapping would allow the modem to be changed to a different serial port simply by changing the symbolic prefix and without affecting any applications.

Pathnames need not start with a slash. In such cases, the path is considered relative to the current working directory. The OS maintains the current working directory as a character string. Relative pathnames are always converted to full network pathnames by prepending the current working directory string to the relative pathname.

Note that different behaviors result when your current working directory starts with a slash versus starting with a network root.

If the current working directory begins with a network root in the form /net/node_name, it's said to be specific and locked to the pathname space of the specified node. If you don't specify a network root, the default one is prepended.

For example, this command:

cd /net/percy

is an example of the first (specific) form, and would lock future relative pathname evaluation to be on node percy, no matter what your default network root happens to be. Subsequently entering cd dev would put you in /net/percy/dev.

On the other hand, this command:

cd /

would be of the second form, where the default network root would affect the relative pathname resolution. For example, if your default network root were /net/florence, then entering cd dev would put you in /net/florence/dev. Since the current working directory doesn't start with a node override, the default network root is prepended to create a fully specified network pathname.
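The two forms can be modeled with a small string-building routine. This is a sketch of the rule as described here, not the OS's code: `full_network_path()` is a hypothetical name, and testing for a literal /net/ prefix is an assumption that ignores any other way a network root might be configured.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Build a full network pathname from a relative pathname.
 * Returns 0 on success, -1 if the result doesn't fit in out. */
int full_network_path(const char *default_root, const char *cwd,
                      const char *rel, char *out, size_t outsz)
{
    assert(default_root && cwd && rel && out);

    /* A working directory under /net/ is "specific": it already
     * names its node, so the default root isn't prepended. */
    int specific = strncmp(cwd, "/net/", 5) == 0;
    const char *root = specific ? "" : default_root;

    /* Avoid a doubled slash when cwd is "/" or ends in '/'. */
    size_t clen = strlen(cwd);
    const char *sep = (clen > 0 && cwd[clen - 1] == '/') ? "" : "/";

    int n = snprintf(out, outsz, "%s%s%s%s", root, cwd, sep, rel);
    return (n >= 0 && (size_t)n < outsz) ? 0 : -1;
}
```

With a working directory of /net/percy, the relative name dev yields /net/percy/dev whatever the default root is; with a working directory of / and a default root of /net/florence, it yields /net/florence/dev.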

To run a command with a specific network root, use the on command, specifying the -f option:

on -f /net/percy command

This runs the given command with /net/percy as the network root; that is, it searches for the command — and any files with relative paths specified as arguments — on /net/percy and runs the command on /net/percy. In contrast, this:

on -n /net/percy command

searches for the given command — and any files with relative paths — on your local node and runs the command on /net/percy.

In a program, you can specify a network root when you call chroot().

This really isn't as complicated as it may seem. Most of the time, you don't specify a network root, and everything you do will simply work within your namespace (defined by your default network root). Most users will log in, accept the normal default network root (i.e., the namespace of their own node), and work within that environment.

In some traditional UNIX systems, the cd (change directory) command modifies the pathname given to it if that pathname contains symbolic links. As a result, the pathname of the new current working directory — which you can display with pwd — may differ from the one given to cd.

In QNX Neutrino, however, cd doesn't modify the pathname — aside from collapsing .. references. For example:

cd /usr/home/dan/test/../doc

would result in a current working directory of /usr/home/dan/doc, even if some of the elements in the pathname were symbolic links.
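Collapsing .. references in this way is a purely lexical operation on the string. A minimal sketch follows, assuming an absolute pathname; `collapse_dotdot()` is our own illustrative function and isn't taken from the shell or the C library. It also drops single-dot components, which goes beyond what is described above.

```c
#include <assert.h>
#include <string.h>

/* Lexically collapse ".." (and ".") components of an absolute path,
 * in place. Symbolic links are never consulted. */
void collapse_dotdot(char *path)
{
    assert(path != NULL && path[0] == '/');

    char *out = path;        /* write cursor; never passes the read cursor */
    const char *in = path;   /* read cursor */

    while (*in != '\0') {
        while (*in == '/')   /* skip separators */
            in++;
        size_t len = strcspn(in, "/");
        if (len == 0)
            break;           /* trailing slash: nothing left to copy */

        if (len == 2 && in[0] == '.' && in[1] == '.') {
            /* Back up over the previous component, if there is one. */
            while (out > path && *--out != '/')
                ;
        } else if (!(len == 1 && in[0] == '.')) {
            *out++ = '/';
            memmove(out, in, len);
            out += len;
        }
        in += len;
    }

    if (out == path)
        *out++ = '/';        /* everything collapsed to the root */
    *out = '\0';
}
```

Applied to /usr/home/dan/test/../doc, the buffer ends up holding /usr/home/dan/doc without any filesystem lookup taking place.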

For more information about symbolic links and .. references, see “QNX 4 filesystem” in the Working with Filesystems chapter of the QNX Neutrino User's Guide.

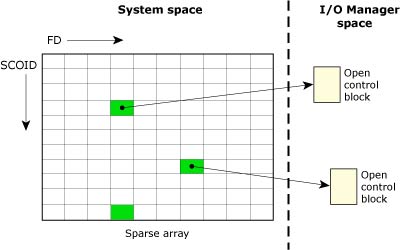

Once an I/O resource has been opened, a different namespace comes into play. The open() returns an integer referred to as a file descriptor (FD), which is used to direct all further I/O requests to that resource manager.

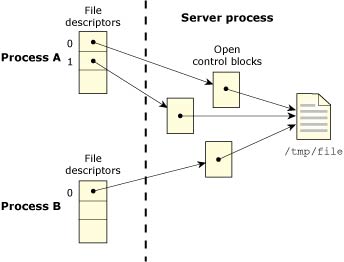

Unlike the pathname space, the file descriptor namespace is completely local to each process. The resource manager uses the combination of a SCOID (server connection ID) and FD (file descriptor/connection ID) to identify the control structure associated with the previous open() call. This structure is referred to as an open control block (OCB) and is contained within the resource manager.

The following diagram shows an I/O manager taking some SCOID, FD pairs and mapping them to OCBs.

The SCOID and FD map to an OCB of an I/O Manager.

The open control block (OCB) contains active information about the open resource. For example, the filesystem keeps the current seek point within the file here. Each open() creates a new OCB. Therefore, if a process opens the same file twice, any calls to lseek() using one FD will not affect the seek point of the other FD. The same is true for different processes opening the same file.
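You can observe the independent seek points with standard POSIX calls. The following sketch opens one scratch file twice and moves the seek point of the first descriptor only; the function name and the /tmp file template are assumptions made for the example.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <fcntl.h>
#include <stdlib.h>
#include <unistd.h>

/* Open the same file twice, then seek on the first descriptor only.
 * Returns the offset still reported by the second descriptor,
 * or -1 on error. */
off_t offset_of_second_open(off_t seek_to)
{
    assert(seek_to >= 0);

    char name[] = "/tmp/ocb_demo_XXXXXX";  /* hypothetical scratch file */
    int fd1 = mkstemp(name);               /* first open */
    if (fd1 == -1)
        return -1;

    int fd2 = open(name, O_RDONLY);        /* second, separate open */
    if (fd2 == -1) {
        close(fd1);
        unlink(name);
        return -1;
    }

    off_t result = -1;
    if (write(fd1, "0123456789", 10) == 10 &&
        lseek(fd1, seek_to, SEEK_SET) == seek_to)
        result = lseek(fd2, 0, SEEK_CUR);  /* query fd2 without moving it */

    close(fd1);
    close(fd2);
    unlink(name);
    return result;
}
```

Because each open() has its own seek point, the second descriptor should still report offset 0 regardless of where the first one was moved.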

The following diagram shows two processes, in which one opens the same file twice, and the other opens it once. There are no shared FDs.

Two processes open the same file.

FDs are a process resource, not a thread resource.

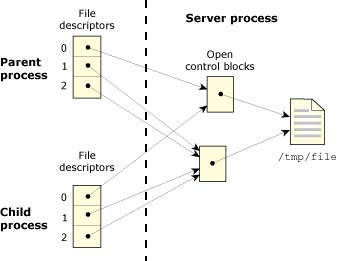

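Several file descriptors in one or more processes can refer to the same OCB. This is accomplished by two means: duplicating an existing file descriptor (for example, with dup()), and inheritance of open file descriptors by a newly created child process.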

When several FDs refer to the same OCB, then any change in the state of the OCB is immediately seen by all processes that have file descriptors linked to the same OCB.

For example, if one process uses the lseek() function to change the position of the seek point, then reading or writing takes place from the new position no matter which linked file descriptor is used.
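The shared seek point is easy to demonstrate with dup(). This is a minimal sketch that uses only standard POSIX calls; the function name and the /tmp file template are assumptions made for the example.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <stdlib.h>
#include <unistd.h>

/* Seek on one descriptor and report the offset seen through its
 * duplicate. Returns that offset, or -1 on error. */
off_t offset_seen_through_dup(off_t seek_to)
{
    assert(seek_to >= 0);

    char name[] = "/tmp/ocb_dup_XXXXXX";   /* hypothetical scratch file */
    int fd = mkstemp(name);
    if (fd == -1)
        return -1;

    int fd_dup = dup(fd);                  /* second FD, same open file */
    if (fd_dup == -1) {
        close(fd);
        unlink(name);
        return -1;
    }

    off_t result = -1;
    if (lseek(fd, seek_to, SEEK_SET) == seek_to)
        result = lseek(fd_dup, 0, SEEK_CUR);  /* query without moving */

    close(fd);
    close(fd_dup);
    unlink(name);
    return result;
}
```

Here the duplicate should report the same offset that was set through the original descriptor, in contrast to two separate open() calls on the same file.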

The following diagram shows two processes in which one opens a file twice, then does a dup() to get a third FD. The process then creates a child that inherits all open files.

A process opens a file twice.

You can prevent a file descriptor from being inherited when you posix_spawn(), spawn(), or exec*() by calling the fcntl() function and setting the FD_CLOEXEC flag.
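Setting the flag takes two fcntl() calls so that any other descriptor flags are preserved. The helper below is a minimal sketch; `set_cloexec()` is our own name, not a library function.

```c
#include <assert.h>
#include <fcntl.h>

/* Mark fd as close-on-exec, keeping any other descriptor flags.
 * Returns 0 on success, -1 on error (errno is set by fcntl()). */
int set_cloexec(int fd)
{
    assert(fd >= 0);

    int flags = fcntl(fd, F_GETFD);        /* read current descriptor flags */
    if (flags == -1)
        return -1;

    return fcntl(fd, F_SETFD, flags | FD_CLOEXEC) == -1 ? -1 : 0;
}
```

Note that FD_CLOEXEC is a property of the file descriptor itself, so a descriptor obtained later with dup() starts with the flag clear.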
